I Fact-Checked My Own Research Paper Before Writing It (You Should Too)

Here's a workflow tip that's either going to save your credibility or confirm that I have an unhealthy relationship with spreadsheets: before you write anything that makes factual claims, build an evidence inventory first.

Not a bibliography. Not a "sources" section at the bottom of a Google Doc. An actual structured inventory where every single factual claim in your paper, blog post, report, or conference talk is cataloged, mapped to a primary source, independently verified, and assigned a status. Verified. Partially verified. Unverified. Or the one that makes your stomach drop: incorrect.

I know this sounds like the kind of advice that belongs on a poster in a university writing center, sandwiched between "cite your sources" and "plagiarism is bad." But I'm not talking about academic hygiene. I'm talking about self-defense.

The Experiment: 49 Claims, One Paper, Zero Written Paragraphs

I'm in the middle of writing an analytical paper about the llms.txt ecosystem—the infrastructure paradox, the adoption data, the inference gap, the trust architecture, all of it. It's the kind of paper that makes heavy factual claims: "X% of websites adopted this standard," "Cloudflare began blocking AI crawlers by default in July 2025," "no major AI provider has confirmed inference-time usage."

Before I wrote a single paragraph, I extracted every factual claim from the paper's outline. All 49 of them. I put them in a structured inventory with columns for claim ID, the specific assertion, the source key from my references file, the verification status, and an evidence notes field for recording what I actually found versus what I expected to find.
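For the curious, here is a minimal sketch of what one row of that inventory could look like in Python. The field names mirror the columns described above, but the class names, the ID scheme ("C-001"), the source keys, and the helper `needs_work` are all hypothetical, invented for illustration rather than taken from my actual file.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    VERIFIED = "verified"
    PARTIALLY_VERIFIED = "partially verified"
    UNVERIFIED = "unverified"
    INCORRECT = "incorrect"
    AUTHOR_ANALYSIS = "author analysis"  # interpretive claim, no external check


@dataclass
class Claim:
    claim_id: str                        # hypothetical ID scheme, e.g. "C-001"
    assertion: str                       # the specific factual claim
    source_key: str                      # key into the references file (made up here)
    status: Status = Status.UNVERIFIED   # every claim starts unverified
    evidence_notes: str = ""             # what was found vs. what was expected


def needs_work(inventory: list[Claim]) -> list[Claim]:
    """Return the claims that are not yet safe to write into a draft."""
    blocking = {Status.UNVERIFIED, Status.PARTIALLY_VERIFIED, Status.INCORRECT}
    return [claim for claim in inventory if claim.status in blocking]


inventory = [
    Claim(
        "C-001",
        "844,000+ websites have implemented some form of llms.txt",
        source_key="adoption-figure",
        status=Status.INCORRECT,
        evidence_notes="No primary source or methodology found.",
    ),
    Claim(
        "C-002",
        "Cloudflare began blocking all AI crawlers by default in July 2025",
        source_key="cloudflare-blog",
    ),
    Claim(
        "C-003",
        "(an interpretive claim from the outline)",
        source_key="",
        status=Status.AUTHOR_ANALYSIS,
    ),
]

for claim in needs_work(inventory):
    print(claim.claim_id, claim.status.value)
```

Defaulting every new row to "unverified" is the design choice that matters: a claim has to earn its way out of that state, rather than being assumed fine until someone objects.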

Then I started verifying.

This is the part where you'd normally expect me to say, "And thankfully, everything checked out." Obviously, that didn't happen. I'm a documentation-first developer with a Markdown compulsion and a growing suspicion that nobody verifies anything before they hit publish anymore. The verification process ate two full days. It involved reading the llms.txt reference parser's Python source code line by line. By the end, my inventory looked less like a spreadsheet and more like a conspiracy theorist's corkboard.

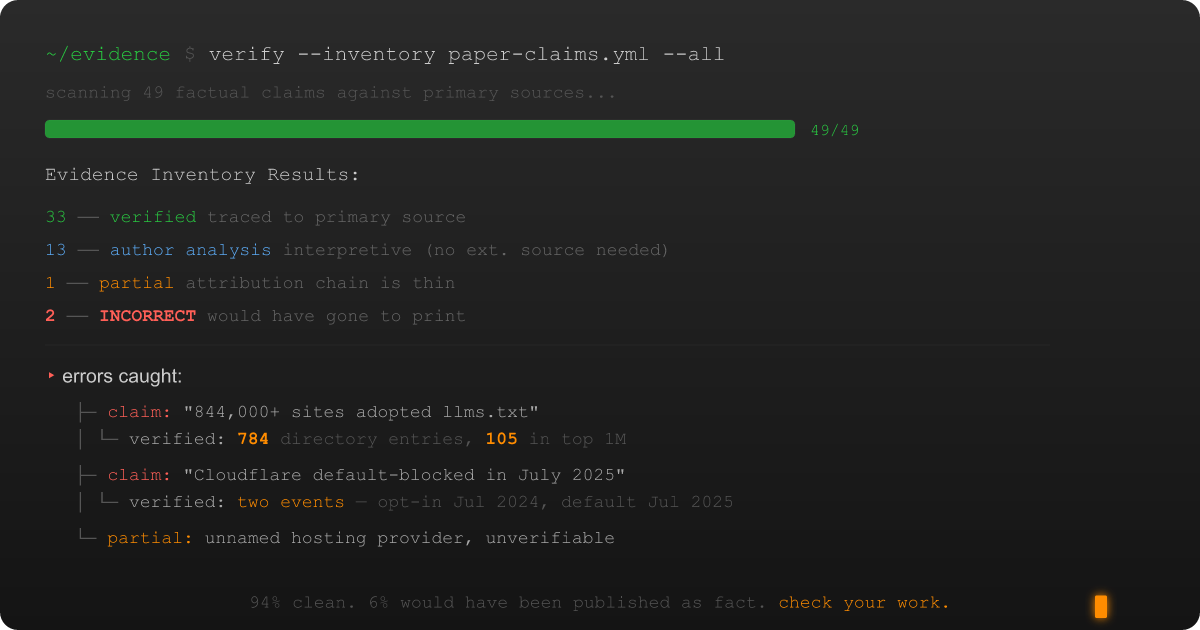

The final tally on those 49 claims: 33 verified cleanly. 13 were author analysis—my own interpretive claims that don't need external verification. 1 came back partially verified.

And 2 came back incorrect.

Two out of 49 doesn't sound catastrophic until you realize both were in the paper's most visible section, and one of them was the marquee adoption statistic that's been circulating through the community unchallenged.
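A small sanity check worth running on any tally like this: do the status counts actually add up to the number of claims? The sketch below uses the counts from this post; the function name `check_tally` is my own invention for illustration.

```python
from collections import Counter


def check_tally(counts: Counter, expected_total: int) -> int:
    """Raise if the per-status counts do not add up to the claim total."""
    total = sum(counts.values())
    if total != expected_total:
        raise ValueError(f"tally covers {total} claims, expected {expected_total}")
    return total


# Counts as reported in this post.
tally = Counter({
    "verified": 33,
    "author analysis": 13,
    "partially verified": 1,
    "incorrect": 2,
})

total = check_tally(tally, expected_total=49)

# Print each status with its share of the whole inventory.
for status, count in tally.most_common():
    print(f"{status:<20}{count:>3}  {count / total:.1%}")
```

If a claim ever falls through the cracks without a status, the check fails loudly instead of letting the summary quietly drift from the inventory.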

Error #1: The 844,000 Sites That Weren't

The paper's outline cited a widely circulated figure: "844,000+ websites have implemented some form of llms.txt." I'd seen this number in blog posts, LinkedIn threads, and conference talks. It felt plausible. The internet is big. Standards get adopted.

So I went looking for the primary source. The actual crawl data. The methodology. The researcher who counted these files and published the result.

I couldn't find one. Not because I didn't look hard enough, but because it apparently doesn't exist.

What I did find was... different. I wrote an entire spin-off blog post about it, because the gap between the claimed figure and reality deserved its own space. The short version: community directories list hundreds of sites. The Majestic Million analysis found 105. Not 844,000. A hundred and five.

I care about this standard. I'm building tools for it. That's why the bad data stung—we need accurate numbers to build a credible case, and I was about to cement an indefensible one into a research paper.

The number was wrong, and I only caught it because the evidence inventory forced me to verify it instead of just citing it.

Error #2: The Cloudflare Timeline That Was Actually Two Events

The paper's outline said: "Cloudflare began blocking all AI crawlers by default on new domains in July 2025 (AIndependence Day)."

Close, but misleadingly compressed. The verification revealed two distinct events a year apart:

July 3, 2024: Cloudflare published the "Declare your AIndependence" blog post, introducing opt-in one-click AI bot blocking. Any customer could toggle it on. At the time, AI bots were accessing roughly 39% of top-million Cloudflare properties, but only 2.98% had bothered to block them.

July 1, 2025: Cloudflare escalated to "Content Independence Day" and made AI bot blocking the default for newly created domains. No opt-in required.

My original outline smooshed both events into a single sentence, implying Cloudflare went from "no blocking" to "default blocking" overnight. The actual story—an escalation from opt-in to default over twelve months—is more accurate and more interesting. It shows a progression, not a switch flip. The corrected timeline actually strengthens the paper's argument about the infrastructure paradox tightening over time.

(And yes, I caught this error for the paper only after shipping it on this very blog. My first post confidently states Cloudflare started default-blocking under the "AIndependence Day" banner. I am bragging about catching an error I already shipped. The turtles go all the way down.)

Without the evidence inventory forcing me to check against Cloudflare's primary documentation, the paper would have presented a misleading compression of two separate events as a single fact. Not maliciously. Just carelessly. Which, when it's your credibility on the line, amounts to the same thing.

Error #3: The Hosting Provider Who Shall Remain Unnameable

This one was subtler. Yoast's analysis cited a hosting provider managing thousands of sites that had observed zero GPTBot activity on llms.txt endpoints. The original claim said "20,000 sites," which was already interesting data. But when I tried to independently verify the hosting provider's identity, the trail went cold.

Yoast's article didn't name them. Web research couldn't identify them. The number shifted between sources. The claim was attributed (Yoast reported it) but the attribution chain was thin: a named source citing an unnamed source reporting an unverifiable number.

For the analytical paper, which needs to survive peer review and earn the trust of a skeptical audience, this claim got cut entirely. The paper relies on Yoast's own verified finding (that GPTBot, ClaudeBot, and Google AI crawlers don't routinely request llms.txt files) instead. Same directional conclusion, stronger epistemic foundation.

When covering the inference gap for the blog posts earlier in this series, I kept the claim because the blog has a slightly different evidence standard, but I made sure to add an explicit transparency caveat: "though the provider wasn't identified, so the attribution chain is thin." Diplomatic but honest.

Two different publication formats. Two different decisions on the same claim. Both documented in the evidence inventory so future-me doesn't have to reconstruct the reasoning.

Why This Works (and Why I Think You Should Do It)

The evidence inventory flagged three problem claims out of 49: two flatly incorrect, one only partially verifiable. Call it a 6% error rate. That's lower than I expected, honestly, and it means the other 94% of the inventory held up. But those three weren't trivial: one was the paper's most visible statistic, one would have misrepresented a major company's timeline, and one would have cited a source that can't be independently verified. Any one of them, published uncorrected, could have undermined the entire paper's credibility.
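For anyone checking my arithmetic, here is the rate worked out. It assumes the three problem claims are the two incorrect ones plus the one partially verified one. The second figure is an alternative denominator of my own choosing: it leaves out the 13 author-analysis claims, since those were never candidates for external verification.

```python
TOTAL_CLAIMS = 49
AUTHOR_ANALYSIS = 13   # interpretive claims, not externally checkable
FLAGGED = 3            # assumption: 2 incorrect + 1 partially verified

# Rate over the whole inventory, as quoted in the post.
rate_all = FLAGGED / TOTAL_CLAIMS

# Rate over only the claims that could have failed verification.
checkable = TOTAL_CLAIMS - AUTHOR_ANALYSIS
rate_checkable = FLAGGED / checkable

print(f"{rate_all:.1%} of all {TOTAL_CLAIMS} claims")         # 6.1% of all 49 claims
print(f"{rate_checkable:.1%} of {checkable} checkable claims")  # 8.3% of 36 checkable claims
```

Either way the number is small. The point is that the denominator is a choice, and it belongs in the evidence notes like everything else.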

Here's the thing: I didn't set out to debunk my own research. I set out to organize it. The evidence inventory started as a project management tool—a way to track which claims I'd sourced and which still needed work. The fact-checking benefit was a side effect. But what a side effect.

The process works because it changes the question. Without an inventory, the question is: "Do I have a source for this?" Low bar. With an inventory, the question becomes: "Can I independently verify this, and does the verification match what I thought I knew?" That bar separates "I read this somewhere" from "I verified this myself."

This isn't just an academic exercise. Blog posts cite statistics. Conference talks reference adoption numbers. Internal reports inform real budget decisions. Anywhere you're writing something that someone else might cite, act on, or use to justify a decision—that's where the evidence inventory earns its keep.

The Practical Version

If the idea of a 49-row evidence inventory sounds exhausting, here's the minimum viable version:

Before you write: Extract every factual claim from your outline. Just the facts. Not opinions, not analysis—the claims that assert something about reality.

For each claim, ask three questions: Where did I get this? Can I verify it from a primary source? Does the primary source actually say what I think it says?

Track the answers. A spreadsheet. A Markdown table. Even a sticky note with "✅ / 🔄 / ❌" on it. The format doesn't matter. What matters is that you wrote down the question and the answer instead of assuming the claim was correct because you'd seen it repeated often enough.

Pay special attention to numbers. Statistics are where the hype cycle lives. Adoption figures, market share percentages, growth rates—these are the claims most likely to have been optimistically extrapolated, selectively sampled, or outright fabricated by someone upstream in the citation chain. Every number should have a methodology you can inspect. If it doesn't, that's not a source. That's a rumor with a hyperlink.

Document your editorial decisions. When a claim turns out to be partially verified or unverifiable, write down what you decided to do about it and why. Future-you will thank present-you, and anyone who questions your methodology will find a documented answer instead of a shrug.
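If you'd rather keep the minimal version in a file than on a sticky note, the steps above can be sketched as a few lines of Python that emit a Markdown table. Everything here is illustrative: the function names, the dictionary keys, the meaning I assign to each emoji, and the decision strings (which are paraphrases) are assumptions, not a format anyone has standardized. The digit check is deliberately crude. It flags dates as well as statistics, which is arguably the right default.

```python
import re

# Assumed meaning of the three sticky-note marks.
STATUS_ICON = {"verified": "✅", "in progress": "🔄", "incorrect": "❌"}

DIGIT = re.compile(r"\d")


def has_number(claim: str) -> bool:
    """True if the claim contains any digit (statistics, dates, counts)."""
    return bool(DIGIT.search(claim))


def to_markdown(rows: list[dict[str, str]]) -> str:
    """Render inventory rows as a Markdown table, flagging numeric claims."""
    lines = [
        "| Status | Claim | Source | Decision |",
        "| --- | --- | --- | --- |",
    ]
    for row in rows:
        claim = row["claim"]
        if has_number(claim):
            claim += " (number: inspect the methodology)"
        cells = [STATUS_ICON[row["status"]], claim, row["source"], row["decision"]]
        # Escape pipes so cell text cannot break the table layout.
        escaped = (cell.replace("|", "\\|") for cell in cells)
        lines.append("| " + " | ".join(escaped) + " |")
    return "\n".join(lines)


rows = [
    {
        "status": "incorrect",
        "claim": "844,000+ websites have implemented some form of llms.txt",
        "source": "no primary source found",
        "decision": "do not repeat the figure",
    },
    {
        "status": "in progress",
        "claim": "A hosting provider saw zero GPTBot activity on llms.txt endpoints",
        "source": "Yoast, citing an unnamed provider",
        "decision": "cut from paper; kept in blog with a caveat",
    },
]

table = to_markdown(rows)
print(table)
```

The "Decision" column is the part people skip and later regret. It costs one cell per claim.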

The Meta-Irony

Yes, I'm aware that writing a blog post about the importance of evidence inventories is itself a documentation-first compulsion taken to its logical extreme. I documented my documentation process and then published documentation about the documentation of the documentation process. There are probably turtles under here somewhere.

But those three errors would have gone to print. Other researchers would have cited them. The 844K figure would have continued circulating through AI discourse with my paper as another link in the chain. The Cloudflare timeline would have been wrong in a way that distorts how quickly the infrastructure landscape is tightening. And the unnamed hosting provider would have been presented as verified data in a document that claims to be rigorous.

Docs first isn't just a workflow. It's epistemic hygiene. Check your facts before you publish them. Check them especially when they confirm what you already believe. And when they come back wrong, publish the corrections with the same enthusiasm you'd publish the findings.

The corrections are the findings. That's the whole point.