Context Windows Are a Lie (And Haiku Protocol Is My Coping Mechanism)

LLM vendors would like you to know that their latest model supports a 128,000-token context window. Some of them say 200,000. One of them, and I won't name names but their logo is a little sunset, says a million. A million tokens. That's more than the entire text of War and Peace, which is appropriate because trying to get useful work done at the far end of a million-token window is its own kind of Russian tragedy.

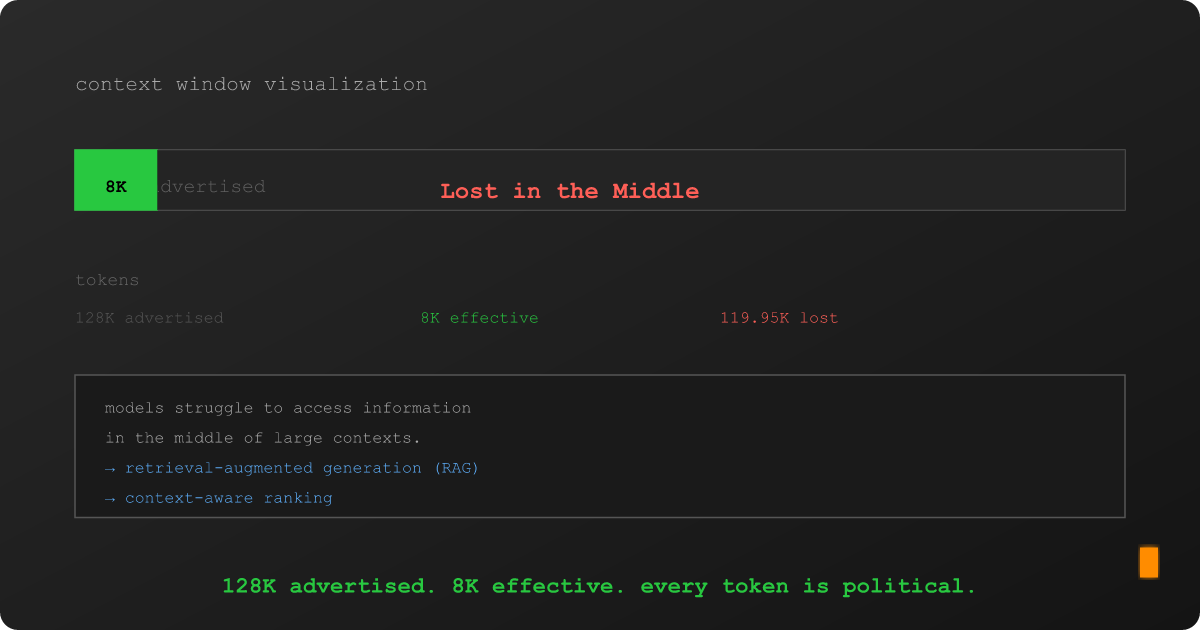

Here's what the marketing materials don't mention: the effective context window, the portion where the model actually pays reliable attention to what you put there, is dramatically smaller. Research from Stanford, Berkeley, and others has converged on a finding that would be funny if it weren't costing people real money: models struggle with information placed in the middle of long contexts. They're great at the beginning. They're decent at the end. The middle? The middle is where facts go to die quietly, unnoticed, like a footnote in a terms of service agreement.

This is the "Lost in the Middle" problem, and if you're building anything that retrieves information and feeds it to a language model (which, in 2026, is approximately everyone) it means the number on the tin is a fantasy. Your 128K window is functionally an 8K window with 120K tokens of expensive padding.
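If you want to check this against your own stack, one common way to probe it is a position sweep: hold the question and the documents fixed, and move the single document that contains the answer through every slot. Below is a minimal Python sketch of the prompt-building half, assuming a multi-document QA setup. `build_prompt`, `position_sweep`, and the prompt template are hypothetical names of my own, and the model call and answer scoring are deliberately left out.

```python
def build_prompt(question: str, gold_doc: str, distractors: list[str], gold_position: int) -> str:
    """Return a QA prompt with the answer-bearing document at index gold_position."""
    docs = list(distractors)              # copy so the caller's list is untouched
    docs.insert(gold_position, gold_doc)  # 0 = first slot, len(distractors) = last slot
    numbered = "\n".join(f"Document [{i + 1}]: {d}" for i, d in enumerate(docs))
    return f"{numbered}\n\nQuestion: {question}\nAnswer:"


def position_sweep(question: str, gold_doc: str, distractors: list[str]) -> list[tuple[int, str]]:
    """Build one prompt for every possible position of the gold document."""
    return [
        (pos, build_prompt(question, gold_doc, distractors, pos))
        for pos in range(len(distractors) + 1)
    ]
```

Send each prompt to the model, score the answers, and plot accuracy against position; a dip in the middle positions is the effect described above.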

I know this because I ran the experiment. Accidentally. Three times.

The Token Budget Problem (Or: How I Learned to Count)

I've been building FractalRecall, a research project exploring what happens when you stop treating metadata as an afterthought and start treating it as structural DNA that shapes how text gets embedded. The thesis is straightforward: if you're going to shove a chunk of text through an embedding model, maybe also mention what kind of text it is. Domain, entity type, canonical status, temporal era. The stuff that's usually stored in a metadata field that retrieval systems politely ignore until someone needs to filter by it.

So I did that. In my D-22 experiment, I prepended a single natural-language sentence to every chunk before embedding it. Something like:

"Domain: faction. Entity: Iron-Banes Alliance. Canon: true."

Twenty-four tokens. One sentence. The kind of thing you could fit in a tweet, if tweets still existed and if the platform hadn't been renamed to a math variable.
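Mechanically, the enrichment step is almost embarrassingly small. Here's a minimal Python sketch, assuming the metadata lives in a dict and the prefix is rendered as `Key: value.` pairs joined by spaces. The helper names `metadata_prefix` and `enrich`, and the blank line between prefix and chunk, are illustrative choices, not the exact D-22 code.

```python
def metadata_prefix(meta: dict[str, str]) -> str:
    """Render metadata as one short sentence, e.g. 'Domain: faction. Canon: true.'"""
    return " ".join(f"{key}: {value}." for key, value in meta.items())


def enrich(chunk: str, meta: dict[str, str]) -> str:
    """Prepend the metadata sentence so the embedder reads it before the content."""
    return f"{metadata_prefix(meta)}\n\n{chunk}"


meta = {"Domain": "faction", "Entity": "Iron-Banes Alliance", "Canon": "true"}
print(enrich("The Iron-Banes Alliance is a faction of armored warriors...", meta))
```

The enriched string, not the raw chunk, is what gets passed to the embedding model; the raw chunk can still be stored separately for display.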

The result? Retrieval quality (NDCG@10) improved by 16.5%. Recall jumped 27.3%. Twenty-four tokens did more for retrieval than the other 974 tokens in most chunks.

And here's the part I didn't expect: 43% of my chunks overflowed the token limit because of those extra 24 tokens. Nearly half the corpus got dropped. And retrieval still improved. Let that sink in. I lost almost half my data and things got better. That's not an endorsement of data loss; it's an indictment of how much noise was in those 974 tokens to begin with.
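For anyone who hasn't met the metrics quoted above: NDCG@10 rewards putting relevant chunks near the top of the first ten results, discounting each hit by the log of its rank and normalizing by the best possible ordering, while recall@k is simply the fraction of relevant chunks that show up in the top k at all. A minimal Python sketch, assuming linear relevance gains (some implementations use 2^rel - 1 instead) and a cutoff for recall as well; the function names are hypothetical.

```python
import math


def dcg_at_k(relevances: list[float], k: int = 10) -> float:
    """Discounted cumulative gain: each gain is divided by log2(rank + 1), ranks from 1."""
    return sum(rel / math.log2(rank + 1) for rank, rel in enumerate(relevances[:k], start=1))


def ndcg_at_k(ranked_relevances: list[float], judged_relevances: list[float], k: int = 10) -> float:
    """DCG of the system's ranking divided by DCG of the ideal ranking.

    ranked_relevances: relevance of each retrieved item, in retrieved order.
    judged_relevances: relevance of every judged item for the query.
    """
    ideal = dcg_at_k(sorted(judged_relevances, reverse=True), k)
    return dcg_at_k(ranked_relevances, k) / ideal if ideal > 0 else 0.0


def recall_at_k(retrieved_ids: list[str], relevant_ids: list[str], k: int = 10) -> float:
    """Fraction of relevant items that appear in the top k results."""
    relevant = set(relevant_ids)
    if not relevant:
        return 0.0
    return len(relevant & set(retrieved_ids[:k])) / len(relevant)
```

A single relevant chunk retrieved at rank 2 instead of rank 1 drops NDCG from 1.0 to about 0.63, which is why small ranking shifts move this metric so visibly.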

Every Token Is a Political Decision

This is where the context window lie becomes personal. When your embedding model has a 1,024-token budget per chunk and your retrieval pipeline feeds 10 chunks into a prompt, you're not working with 128K tokens. You're working with maybe 10,000, and every single one of them is a decision.

Twenty-four tokens for metadata means twenty-four fewer tokens for content. Scale that to eight layers of context (corpus identity, domain classification, entity type, authority status, temporal period, relationships, section heading, and the chunk's position in the document) and suddenly your "enrichment" is eating 60–80 tokens per chunk. On a 600-token budget, that's 10–13% of your real estate gone before you've said a single thing about what the chunk actually contains.
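The arithmetic is simple enough to script, which helps when you're deciding how many layers you can afford. A sketch, assuming you already have per-chunk content token counts from whatever tokenizer your embedding model uses; `budget_report` and its fields are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class BudgetReport:
    content_room: int  # tokens left for chunk content once the prefix is in
    overhead: float    # share of the per-chunk budget spent on the prefix
    overflowing: int   # chunks whose content + prefix exceeds the budget


def budget_report(content_lengths: list[int], prefix_tokens: int, chunk_budget: int) -> BudgetReport:
    """Summarize what a fixed-size metadata prefix costs on a per-chunk token budget."""
    overflowing = sum(1 for n in content_lengths if n + prefix_tokens > chunk_budget)
    return BudgetReport(
        content_room=chunk_budget - prefix_tokens,
        overhead=prefix_tokens / chunk_budget,
        overflowing=overflowing,
    )


print(budget_report([500, 540, 560], prefix_tokens=60, chunk_budget=600))
```

A 60-token prefix on a 600-token budget gives an overhead of 0.10 and an 80-token prefix about 0.133; the `overflowing` count is the number that quietly turns into dropped chunks.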

This is the tension nobody talks about in RAG tutorials. Everyone says "add context to your retrieval." Nobody mentions that context has a cost, and that cost is denominated in the same currency as your content. There is no free lunch. There is no free token. Every prefix you add is a sentence you can't keep.

I spent three experiment notebooks (D-21, D-22, D-23) grappling with this tradeoff, and I can report that the optimal answer is "it depends." Which I understand is unsatisfying, and I apologize, but I am a documentation-first developer and I am constitutionally incapable of oversimplifying a nuanced finding for the sake of a cleaner narrative. The data says it depends. The data is right. I just work here.

The Bigger Lie: Context Windows at Inference Time

But here's the thing: the token budget problem in embeddings is actually a small version of a much larger problem. The same squeeze happens at inference time, except the stakes are higher and the failure mode is less "lower NDCG" and more "the model starts hallucinating because it forgot what you told it six paragraphs ago."

When you ask an LLM a question and your pipeline retrieves relevant context, that context goes into the prompt. The more context, the better, in theory. In practice, there's a point of diminishing returns that arrives much sooner than the model's advertised context window, and it arrives silently. The model doesn't say "I'm losing track of your earlier context." It just starts making things up with the same confidence it uses when it's right. Hallucination doesn't come with a warning label. It comes with citations that look plausible and facts that are almost correct and an authoritative tone that would make a Wikipedia editor proud.

So the real question isn't "how much context can you fit?" It's "how much context should you fit, and how do you make every token count?"
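One blunt answer to "how much should you fit" is to stop treating the advertised window as the target and enforce a much smaller token budget of your own choosing. A minimal greedy packing sketch in Python, assuming each retrieved chunk arrives with a relevance score and a precomputed token count; `pack_context` is a hypothetical helper, not part of FractalRecall or any library.

```python
def pack_context(chunks: list[tuple[float, int, str]], token_budget: int) -> tuple[list[str], int]:
    """Greedily keep the highest-scoring chunks that fit inside token_budget.

    Each chunk is (relevance_score, token_count, text). Returns the kept texts,
    best first, and the number of tokens they use.
    """
    kept: list[str] = []
    used = 0
    for _score, tokens, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        if used + tokens <= token_budget:  # skip chunks that would overflow, keep trying smaller ones
            kept.append(text)
            used += tokens
    return kept, used
```

This version skips an oversized chunk and keeps looking for smaller ones that still fit; stopping at the first chunk that doesn't fit is an equally defensible choice if you'd rather never include a lower-ranked chunk in place of a higher-ranked one.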

Which brings me to Haiku Protocol, and the coping mechanism I mentioned in the title.

Semantic Minification (Or: Prose Compression for People Who Count Tokens)

Haiku Protocol started as a thought experiment: what if you could compress documentation without losing meaning? Not summarization, because summaries lose detail. Not truncation, because truncation loses endings. Compression. The textual equivalent of gzip, but for semantics.

The idea is a Controlled Natural Language (a deliberately restricted subset of language) plus a systematic transformation that takes verbose, human-friendly prose and produces dense, machine-optimized strings that preserve the same information in fewer tokens. Think of it as semantic minification. The way a JavaScript minifier turns `function calculateTotalPrice` into `function a` without losing functionality, Haiku Protocol turns "The Iron-Banes Alliance is a faction of armored warriors who have forged a pact with the Iron Covenant, granting them dominion over metalworking and siege warfare" into something like:

```text
FACTION:Iron-Banes|PACT:Iron-Covenant|DOMAIN:metalwork,siege|CLASS:armored-warrior
```
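To make the shape of that string concrete, here's a toy encoder/decoder in Python. It assumes a grammar inferred from this one example (`|` between fields, `:` between key and value, `,` between multiple values). It is not Haiku Protocol's actual parser, and `encode`/`decode` are hypothetical names.

```python
SEPARATORS = "|:,"


def encode(record: dict[str, list[str]]) -> str:
    """Serialize {"KEY": ["v1", "v2"]} as "KEY:v1,v2|..." under the toy grammar."""
    for key, values in record.items():
        for part in [key, *values]:
            if any(sep in part for sep in SEPARATORS):
                raise ValueError(f"separator character in {part!r}")
    return "|".join(f"{key}:{','.join(values)}" for key, values in record.items())


def decode(line: str) -> dict[str, list[str]]:
    """Parse the toy pipe-delimited format back into a dict of value lists."""
    record: dict[str, list[str]] = {}
    for field in line.split("|"):
        key, sep, value = field.partition(":")
        if not sep or not key:
            raise ValueError(f"malformed field: {field!r}")
        record[key] = value.split(",")
    return record
```

The round trip matters more than the compression: if `encode(decode(s))` doesn't give back `s`, the format is ambiguous, and an ambiguous format can't promise it preserved anything.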

Same information. Fraction of the tokens. The model doesn't need flowery prose to understand the relationships; it needs the structure. And if you're paying per token (which you are, whether you're using an API or burning GPU cycles), every word that doesn't carry meaning is money and attention you're setting on fire.

If your 24-token metadata prefix can improve retrieval by 16%, what happens when you compress the entire chunk to preserve more content per token? What if, instead of choosing between enrichment and content, you compress the content to make room for the enrichment? You're not losing information; you're encoding it more efficiently.
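Here's that tradeoff as arithmetic: a sketch assuming compression can be summarized by a single ratio (compressed tokens divided by original tokens), which real text won't honor uniformly. `required_ratio` and `recovered` are hypothetical helpers, and the numbers below are illustrative, not experimental results.

```python
import math


def required_ratio(content_tokens: int, prefix_tokens: int, chunk_budget: int) -> float:
    """Largest compressed/original size ratio that still lets the chunk fit behind the prefix."""
    room = chunk_budget - prefix_tokens
    if room <= 0:
        raise ValueError("the prefix alone fills the budget")
    if content_tokens <= 0:
        return 1.0
    return min(1.0, room / content_tokens)


def recovered(content_lengths: list[int], prefix_tokens: int, chunk_budget: int, ratio: float) -> int:
    """Count chunks that overflow uncompressed but fit once compressed by `ratio`."""
    return sum(
        1
        for n in content_lengths
        if n + prefix_tokens > chunk_budget
        and math.ceil(n * ratio) + prefix_tokens <= chunk_budget
    )
```

For a 1,010-token chunk behind a 24-token prefix on a 1,024-token budget, `required_ratio(1010, 24, 1024)` is about 0.99: shaving roughly 1% would be enough to keep a chunk that would otherwise be dropped.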

I haven't proven this works yet. Haiku Protocol is earlier in its lifecycle than FractalRecall. But the thesis is grounded in the same uncomfortable truth: tokens are expensive, context windows are smaller than advertised, and the AI industry's answer ("just make the window bigger") is a hardware solution to an information architecture problem.

What These Two Projects Have in Common

FractalRecall and Haiku Protocol approach the same problem from opposite directions:

- FractalRecall asks: What's the most valuable information I can add to each chunk? It enriches content with metadata, gambling that 24 tokens of the right context outperform 24 tokens of the wrong content.
- Haiku Protocol asks: How do I make room for more signal per chunk? It compresses content to reclaim token budget, gambling that dense-but-complete representations outperform verbose-but-truncated ones.

Together, they form a thesis: the quality of tokens matters more than the quantity of tokens. A 128K context window full of noise is worse than a 4K window full of signal. An embedding with 24 tokens of metadata outperforms an embedding with nothing but raw text. A compressed chunk that fits entirely in the budget beats a verbose chunk that overflows and gets dropped.

This is not a novel observation. Information theory has been saying this since Shannon. But the AI industry keeps building bigger windows instead of better content, and someone needs to run the experiments that ask whether that's actually working.

I'm someone. These are the experiments.

What Comes Next

FractalRecall has three completed experiment notebooks (D-21 through D-23) and a fourth in planning. The results so far: baseline established, single-layer enrichment shows statistically significant improvement, and multi-layer enrichment taught me that your evaluation pipeline can produce statistically significant results even when every single metric is wrong. But that's a story for another post.

Haiku Protocol has a working parser, a conformance level system, and a growing test suite. The compression experiments haven't started yet, but the infrastructure is in place.

Both projects share the same research question: in a world where every AI vendor is racing to build a bigger context window, is anyone stopping to ask whether we're filling those windows with the right things?

I am. And yes, I wrote the documentation first.

This post is the first in a series covering FractalRecall and Haiku Protocol. Next up: the D-22 overflow story, or what happens when you improve retrieval by accidentally deleting 43% of your data.