Embedding Models Don't Read Your Metadata (But They Should)

Here's a sentence your embedding model understands perfectly well:

"This is a canonical faction document from the post-Glitch era describing cultural practices and political structure."

And here's functionally identical information that your embedding model treats as random noise:

```yaml
canon: true
domain: faction
era: post-glitch
topics: [culture, politics]
```

Same facts. Same document. Different embedding behavior. The YAML blob gets processed as four disconnected key-value pairs with very little semantic weight. The natural language sentence gets encoded as a rich set of contextual signals that tell the model what this document is, what it's about, and how it relates to the kind of questions someone might ask.

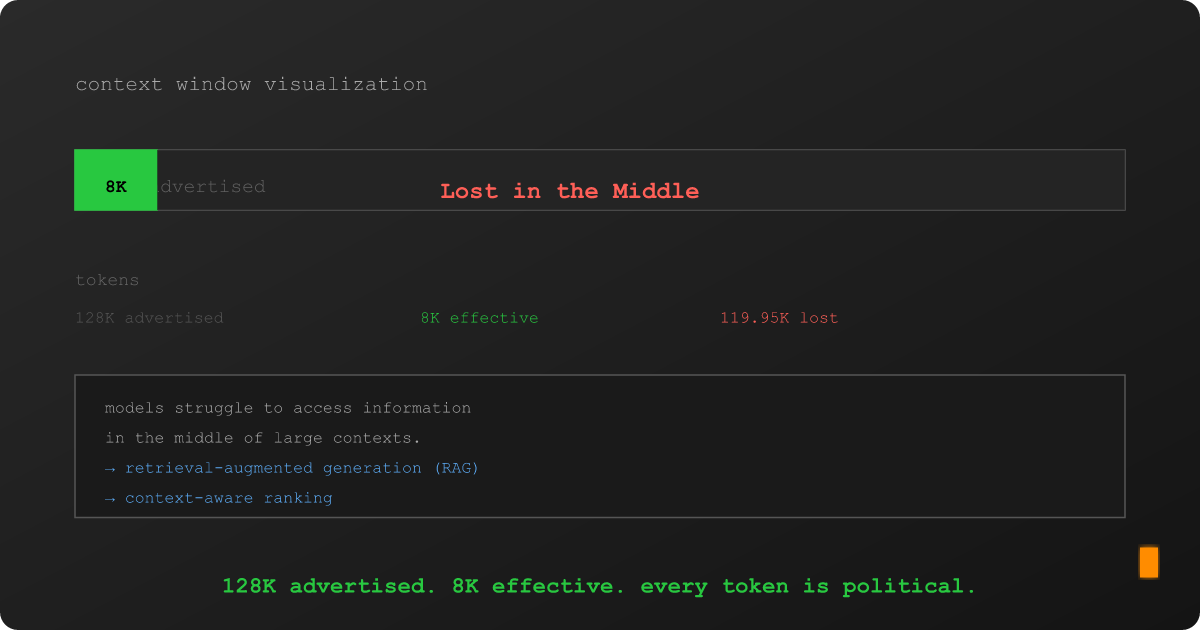

The gap between the metadata your system knows and the context your embeddings encode is the single biggest free improvement sitting in most RAG pipelines. Almost nobody exploits it.
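One way to close that gap is to render the metadata as a sentence and prepend it to the text before it goes to the embedding model. Here's a minimal sketch of that idea, not a definitive implementation. The function names (`metadata_to_sentence`, `build_embedding_input`), the sentence template, and the assumption that every document carries a `domain` field are all illustrative choices; the field names come from the YAML example above.

```python
def join_phrases(items):
    """Join a list of words into readable English: 'a', 'a and b', 'a, b, and c'."""
    items = list(items)
    if len(items) <= 1:
        return "".join(items)
    if len(items) == 2:
        return f"{items[0]} and {items[1]}"
    return ", ".join(items[:-1]) + f", and {items[-1]}"


def metadata_to_sentence(meta):
    """Render structured metadata as one natural-language sentence.

    Hypothetical template: assumes 'domain' is always present and that
    'canon', 'era', and 'topics' are optional.
    """
    canon = "canonical" if meta.get("canon") else "non-canonical"
    parts = [f"This is a {canon} {meta['domain']} document"]
    if meta.get("era"):
        parts.append(f"from the {meta['era']} era")
    topics = meta.get("topics") or []
    if topics:
        parts.append(f"covering {join_phrases(topics)}")
    return " ".join(parts) + "."


def build_embedding_input(meta, body):
    """Prepend the metadata sentence to the document text before embedding."""
    return f"{metadata_to_sentence(meta)}\n\n{body}"


meta = {
    "canon": True,
    "domain": "faction",
    "era": "post-glitch",
    "topics": ["culture", "politics"],
}
print(metadata_to_sentence(meta))
# This is a canonical faction document from the post-glitch era covering culture and politics.
```

The string returned by `build_embedding_input` is what you would pass to whatever embedding call your pipeline already uses. The raw YAML can still live alongside the vector for filtering; the sentence only exists to give the embedding something it can read.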