78.8% of My Validator Is Made Up (And That's the Point)

I recently did something that most software developers would consider either admirably honest or clinically inadvisable: I audited my own tool against the specification it claims to implement, wrote down the results in excruciating detail, and published them.

The tool is DocStratum, a documentation quality platform for llms.txt files. The project started with a thesis that most people in the AI tooling space either haven't considered or don't want to hear: a Technical Writer with strong Information Architecture skills can outperform a sophisticated RAG pipeline by simply writing better source material. Structure is a feature. DocStratum exists to prove it.

At its core, DocStratum is a validation framework — think ESLint, but for a Markdown standard defined by a blog post instead of a formal grammar. It checks your llms.txt file across five validation levels: basic parseability (L0), structural compliance (L1), content quality (L2), best practices (L3), and a full extended-quality tier (L4). It categorizes findings across 38 diagnostic codes using three severity levels (Error, Warning, Info). It detects anti-patterns — 22 of them, with names like "The Ghost File," "The Monolith Monster," and "The Preference Trap." It has opinions.

Those opinions, it turns out, are almost entirely our own invention. (Good.)

A Note on "We"

You'll notice this post says "we" a lot. That's not a royal we, and it's not a startup founder trying to sound bigger than a one-person operation. It's me and an assistant, Claude. Specifically, I write the research, design the architecture, make the editorial calls, and generate roughly 300% more tangential ideas than any single project can absorb. Claude helps me organize, audit, edit, and (most critically) finish things before my ADHD has me chasing a fourth concurrent rabbit hole.

The collaboration is genuine. The opinions in DocStratum are mine. The structured process that turned those opinions into a publishable audit with 52 classified items, instead of a sprawling notes file peppered with "TODO: organize this eventually"? That's the partnership. I provide the chaos and the conviction. Claude provides the throughput and the gentle reminder that I was supposed to be writing about validation tiers, not redesigning the glossary (again).

I mention this because I think that hiding AI involvement while writing about AI infrastructure would be, at a minimum, ironic. And I'm about to use up my irony budget elsewhere in this post.

The Audit

The audit was straightforward in concept and slightly unhinged in execution. We took every validation criterion, every canonical section definition, and every formal grammar rule in DocStratum's standards library (52 items in total, drawn from 149 ratified ASoT standard files across 27 research documents) and classified each one into exactly one of three categories:

Spec-Compliant (SC): The behavior directly follows from the llms.txt specification AND matches the reference parser's actual behavior. The reference parser is miniparse.py in the AnswerDotAI/llms-txt repository. If the parser checks it, it's spec-compliant.

Spec-Implied (SI): A reasonable inference the spec assumes but doesn't explicitly state. Not contradicted by the reference parser, but not actively checked by it either. Things like "the file should be valid UTF-8," which the spec doesn't mention but which is kind of implied by "it's a Markdown file" in the same way that "the restaurant should have a floor" is implied by "it's a building."

DocStratum Extension (EXT): Goes beyond what the spec defines or the parser implements. Our value-add. Our opinions. Our educated guesses about what makes an llms.txt file actually useful to an AI system, as opposed to merely parseable by one.

Here's what we found.

The Numbers

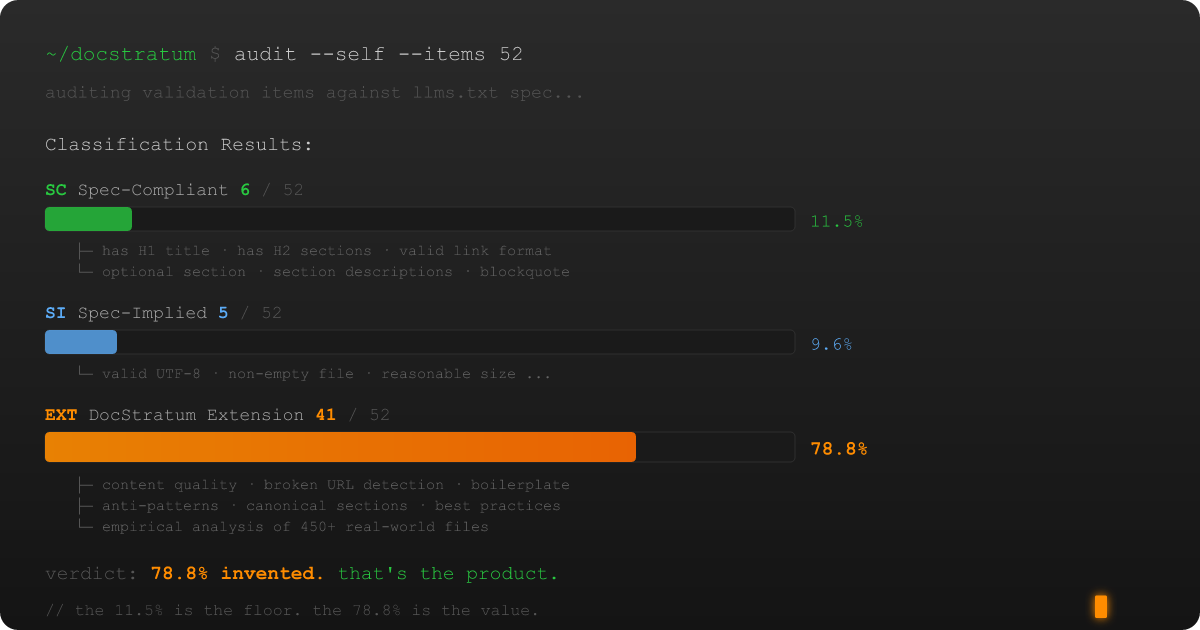

Out of 52 audited items:

- 6 items (11.5%) are Spec-Compliant

- 5 items (9.6%) are Spec-Implied

- 41 items (78.8%) are DocStratum Extensions

Put differently: nearly four out of five things DocStratum checks have nothing to do with the llms.txt specification. We made them up. "Made them up" in the same way that a building inspector "makes up" the requirement that load-bearing walls should bear loads—but still. Ours, not the spec's.

The 52 items break down by level like this: L0 (Parseable) contributes 5 items with 0 SC, 3 SI, and 2 EXT. L1 (Structural) contributes 6 items with 4 SC, 1 SI, and 1 EXT. L2 (Content Quality) contributes 7 items — all EXT. L3 (Best Practices) contributes 9 items — all EXT. L4 (DocStratum Extended) contributes 8 items — all EXT. The remaining 17 items come from the 12 canonical section name standards and 5 ABNF grammar extension points.
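The tallies above are easy to cross-check. Here is a minimal sketch that uses only the counts quoted in this post. The variable names are illustrative, and the per-category split of the remaining 17 items is derived by subtraction rather than stated in the audit.

```python
# (SC, SI, EXT) counts per validation level, as quoted in the post.
LEVELS = {
    "L0": (0, 3, 2),
    "L1": (4, 1, 1),
    "L2": (0, 0, 7),
    "L3": (0, 0, 9),
    "L4": (0, 0, 8),
}
TOTALS = {"SC": 6, "SI": 5, "EXT": 41}  # headline numbers from the audit
TOTAL_ITEMS = sum(TOTALS.values())

# Column sums across L0-L4, keyed by category.
level_sums = dict(zip(TOTALS, (sum(col) for col in zip(*LEVELS.values()))))
level_items = sum(level_sums.values())

# Whatever the levels don't account for must come from the section-name
# and grammar items (derived here, not stated in the audit).
remainder = {k: TOTALS[k] - level_sums[k] for k in TOTALS}

# Percentage share of each category, rounded to one decimal place.
shares = {k: round(100 * v / TOTAL_ITEMS, 1) for k, v in TOTALS.items()}

print(level_items, remainder, shares)
```

The five levels account for 35 items. By subtraction, the other 17 (section names plus grammar extension points) would hold the remaining 2 SC, 1 SI, and 14 EXT classifications.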

The pattern is stark: L1 is the only level where the spec has meaningful coverage. Everything above and below it is either pre-specification assumptions (L0) or DocStratum's quality framework (L2–L4).

If you're wondering what the 6 spec-compliant items actually are, here's the complete list. It won't take long:

- H1 Title Present. The spec says files should start with an H1. The parser extracts it with `^# (.+)$`. Both agree.

- Single H1 Only. The spec describes one H1 as the document title. The parser captures only the first match. Multiple H1s would be structurally ambiguous.

- H2 Section Structure. The spec requires sections delimited by H2 headers. The parser splits on `^##\s*(.*?$)`. At least one H2 is required by both.

- Link Format Compliance. The spec defines the link format as `- [Title](URL): description`. The parser enforces it with a regex that I will not reproduce here because some things should remain between a developer and their regular expressions.

- "Optional" Section Recognition. The spec names "Optional" as a semantically special section. The parser checks `k != 'Optional'` to exclude it by default. Case-sensitive. Exact match. No aliases.

- Permissive Line Endings. Both the grammar and the parser accept CRLF and LF.
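To make the list concrete, here is a rough sketch of what spec-level checking could look like. It reuses the two heading patterns quoted above. Everything else is an assumption for illustration: the function names, the finding messages, and especially `LINK_RE`, which is a simplified stand-in and not the reference parser's regex. This is neither DocStratum's code nor miniparse.py.

```python
import re

# Heading patterns quoted in the post for the reference parser.
H1_RE = re.compile(r"^# (.+)$", re.MULTILINE)
H2_RE = re.compile(r"^##\s*(.*?$)", re.MULTILINE)
# ASSUMPTION: simplified stand-in for "- [Title](URL): description".
# This is not the reference parser's actual link regex.
LINK_RE = re.compile(r"^- \[([^\]]+)\]\(([^)]+)\)(?::\s*(.*))?$")


def spec_level_findings(text: str) -> list[str]:
    """Return human-readable findings for the spec-level checks only."""
    text = text.replace("\r\n", "\n")  # treat CRLF and LF the same
    findings = []

    h1_count = len(H1_RE.findall(text))
    if h1_count == 0:
        findings.append("missing H1 title")
    elif h1_count > 1:
        findings.append("multiple H1 titles")

    if not H2_RE.search(text):
        findings.append("no H2 sections")

    for line in text.split("\n"):
        # In this sketch every "- " list entry must be a well-formed link.
        if line.startswith("- ") and not LINK_RE.match(line):
            findings.append(f"malformed link entry: {line!r}")

    return findings


def has_optional_section(text: str) -> bool:
    """Exact, case-sensitive match on the section name 'Optional'."""
    names = H2_RE.findall(text.replace("\r\n", "\n"))
    return "Optional" in names
```

Note that the sketch does not skip fenced code blocks, so a `# comment` line inside a code sample would be counted as an H1. That is acceptable for an index-style file and wrong for anything larger.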

That's it. Those six items are the entirety of what "spec-compliant llms.txt validation" looks like. Does it have an H1? Does it have at least one H2? Are the links formatted correctly? Is there an Optional section? Cool. You pass. The reference parser is approximately 20 lines of Python regex, and it validates roughly as much as you'd expect from 20 lines of Python regex, which is to say: the bare structural minimum.

The Gap Between "Parseable" and "Useful"

So why build a tool that's 78.8% opinions?

The llms.txt spec is deliberately minimal, and there's wisdom in that. Jeremy Howard designed it that way. The canonical parser is 20 lines of regex-based string processing—clean, portable, unopinionated. It defines a file format, not a quality framework. It tells you what an llms.txt file looks like. It doesn't try to tell you whether that file is any good, because quality criteria are contextual and the spec wisely avoids baking in assumptions that might not age well.

And "good" matters. An llms.txt file exists for one purpose: to help AI systems understand your documentation. A file that technically parses but contains broken links, empty sections, placeholder content, duplicate headings, and auto-generated descriptions that all say "Learn about X" is not doing that job. It's the documentation equivalent of a restaurant menu that lists every dish as "food" with no further detail. Technically accurate. Functionally useless.

DocStratum's 41 extensions are everything between "it parses" and "it actually helps." Content quality checks that flag broken URLs, empty sections, and boilerplate descriptions. Best-practice patterns derived from detailed analysis of 18 real-world implementations across 6 categories and 11 raw specimens collected for byte-level conformance testing, plus survey data from community directories listing hundreds of llms.txt deployments. Anti-pattern detection that catches files providing zero or negative value to LLM agents — and I mean specific, named anti-patterns, not just vague warnings. "The Ghost File" (empty or whitespace-only). "The Structure Chaos" (unparseable Markdown). "The Monolith Monster" (files exceeding 100,000 tokens — good luck fitting that in a context window). "The Preference Trap" (SEO-style gaming that exploits the trust LLMs place in llms.txt files). Twenty-two patterns total across four severity categories.
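Two of those anti-patterns are simple enough to sketch in a few lines. The snippet below is illustrative only: the function names are hypothetical, and the token count is a crude characters-divided-by-four estimate that I am assuming for the example, not a real tokenizer and not necessarily how DocStratum measures size.

```python
MONOLITH_TOKEN_LIMIT = 100_000  # threshold quoted in the post


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """ASSUMPTION: crude character-based estimate, not a real tokenizer."""
    return int(len(text) / chars_per_token)


def detect_anti_patterns(text: str) -> list[str]:
    """Return the names of the anti-patterns this sketch can recognize."""
    hits = []
    if not text.strip():
        # Empty or whitespace-only file.
        hits.append("The Ghost File")
    if estimate_tokens(text) > MONOLITH_TOKEN_LIMIT:
        # Too large to fit comfortably in a context window.
        hits.append("The Monolith Monster")
    return hits
```

A production check would swap in the tokenizer of the target model, since the characters-per-token ratio varies with language and content.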

And then there's the canonical vocabulary: a standardized set of 11 section names optimized for how AI systems navigate documentation — Master Index, LLM Instructions, Getting Started, Core Concepts, API Reference, Examples, Configuration, Advanced Topics, Troubleshooting, FAQ, and Optional. Of those 11, exactly one comes from the spec (Optional). The other 10 are ours, drawn from empirical patterns in high-quality specimens and optimized for progressive disclosure to LLM agents.
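A vocabulary check like this reduces to a set lookup. The sketch below uses the 11 names listed above. The exact, case-sensitive comparison is an assumption borrowed from how the parser treats "Optional"; the real matching rules may be more forgiving.

```python
# The 11 canonical section names listed in the post.
CANONICAL_SECTIONS = (
    "Master Index", "LLM Instructions", "Getting Started", "Core Concepts",
    "API Reference", "Examples", "Configuration", "Advanced Topics",
    "Troubleshooting", "FAQ", "Optional",
)
SPEC_DEFINED = {"Optional"}  # the only name that comes from the spec


def non_canonical(section_names: list[str]) -> list[str]:
    """Return section names outside the canonical vocabulary.

    ASSUMPTION: exact, case-sensitive comparison.
    """
    return [name for name in section_names if name not in CANONICAL_SECTIONS]


print(non_canonical(["Getting Started", "Docs", "Optional", "faq"]))
```

Findings from a check like this would be extension-level recommendations: a file with a "Docs" section still parses fine.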

None of this is in the spec. All of it matters if you care about whether your llms.txt file is worth the bytes it occupies.

What the Research Actually Found

Before we built the validation framework, we did the research. This isn't a point I want to rush past, because the research is what separates DocStratum's opinions from arbitrary preferences.

The specification deep-dive (v0.0.1) produced a formal ABNF grammar for the llms.txt format — the spec doesn't provide one. That grammar revealed something interesting: the spec's informal structure description actually maps to two distinct document types. Type 1 (Index) files follow the grammar faithfully: single H1, optional blockquote, H2 sections with curated link entries. These are the "card catalog" files — navigational entry points for AI agents. Type 2 (Full) files embed complete documentation content inline, with multiple heading levels, extensive prose, and fenced code blocks. Anthropic's claude-llms-full.txt (25 MB, 956,573 lines) is a Type 2. The spec doesn't distinguish between them. DocStratum does, because a validator that treats a 1.1 KB navigation index and a 25 MB documentation dump as the same structural entity is a validator that's lying to you.
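The post does not spell out how the two types are told apart, so the following is only a guess at a heuristic, based on the traits described above: a Type 2 file has deeper heading levels or fenced code blocks, and a Type 1 file does not. The function name and both patterns are assumptions for illustration.

```python
import re

# A line that opens or closes a fenced code block (backticks or tildes).
FENCE_RE = re.compile(r"^(`{3,}|~{3,})", re.MULTILINE)
# Any heading deeper than H2.
DEEP_HEADING_RE = re.compile(r"^#{3,6} ", re.MULTILINE)


def guess_document_type(text: str) -> str:
    """ASSUMPTION: heuristic classification, not DocStratum's actual rule."""
    if FENCE_RE.search(text) or DEEP_HEADING_RE.search(text):
        return "Type 2 (Full)"
    return "Type 1 (Index)"
```

A real classifier would probably also weigh file size and the ratio of prose to link entries, since a 25 MB file is unlikely to be a navigation index whatever its headings look like.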

The correlation analysis from the empirical research produced some genuinely useful results. The presence of code examples turned out to be the strongest single predictor of overall documentation quality (r ≈ 0.65). Link description quality correlated at r ≈ 0.45. These correlations are the empirical basis for why DocStratum's content quality checks (L2) weight certain features the way they do; the weights aren't arbitrary.

The gap analysis identified 8 areas the spec intentionally leaves undefined: maximum file size, required metadata, versioning, validation schema, caching recommendations, multi-language support, concept definitions, and example Q&A pairs. Each gap is an opportunity for tooling to fill. DocStratum's extensions map directly to these gaps.

Why We Published the Audit

The obvious objection: "If 78.8% of your tool is extensions beyond the spec, how can you call it a spec validator? Isn't this just your opinions dressed up in technical authority?"

Yes. Partially. And that's exactly why we published the audit.

Every validation criterion in DocStratum now carries a spec_origin classification. When DocStratum reports that your file fails a check, the report tells you whether that check is something the spec requires (SC), something the spec implies (SI), or something DocStratum recommends (EXT). You can see the difference. You can filter by it. If you only care about spec compliance, you can run DocStratum in a mode that limits checks to SC and SI criteria, which is effectively levels L0 and L1 minus the extension criteria at those levels (two at L0 and one at L1 in the breakdown above).
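Filtering on that classification is straightforward once each finding carries it. Here is a minimal sketch, assuming a finding is a small record with a `spec_origin` field. The `Finding` class, the `filter_findings` function, and the diagnostic codes are hypothetical placeholders, not DocStratum's actual API or code numbering.

```python
from dataclasses import dataclass

SPEC_ORIGINS = ("SC", "SI", "EXT")


@dataclass(frozen=True)
class Finding:
    code: str         # hypothetical diagnostic code
    message: str
    spec_origin: str  # one of SPEC_ORIGINS


def filter_findings(findings, allowed=("SC", "SI")):
    """Keep only findings whose spec_origin is in `allowed`.

    The default mirrors a spec-only mode: SC and SI, no extensions.
    """
    return [f for f in findings if f.spec_origin in allowed]


findings = [
    Finding("X001", "missing H1 title", "SC"),
    Finding("X002", "file is not valid UTF-8", "SI"),
    Finding("X003", "section name not in canonical vocabulary", "EXT"),
]
for finding in filter_findings(findings):
    print(finding.code, finding.spec_origin, finding.message)
```

Passing `allowed=("EXT",)` gives the opposite view: only the recommendations, with the spec-level findings hidden.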

The extension labeling makes DocStratum more credible, not less. A tool that silently mixes spec requirements with opinionated recommendations is a tool you can't trust, because you never know which findings reflect the standard and which reflect the tool author's aesthetic preferences about Markdown structure. (I have strong aesthetic preferences about Markdown structure. They are well-documented. They are not the specification.)

By being transparent about the classification, we're giving you the information you need to make an informed decision about which validation findings to act on. Spec-compliant findings are non-negotiable: if your H1 is missing, your file isn't llms.txt. Extension findings are recommendations: if your section names don't match DocStratum's canonical vocabulary, your file still parses fine, but it might not be organized in the way that we've found (empirically, across real-world specimens) produces the best results for AI consumption.

What the Audit Taught Us About Specs and Tooling

The deeper takeaway from this exercise isn't about DocStratum specifically. It's about what happens when a specification is intentionally minimal and tooling has to fill the gaps.

The llms.txt spec is three paragraphs of format description and a 20-line Python script. That's not a criticism—it might be the smartest design decision in the whole ecosystem. Minimal specs are easy to implement, hard to get wrong, and they let the ecosystem experiment organically instead of locking in decisions prematurely. The HTML spec took decades to stabilize. RSS spawned a format war. Jeremy Howard looked at that history and published something a competent developer could implement during a lunch break.

But minimal specs create an opportunity, and opportunities get seized. In the case of llms.txt, the opportunity is quality. The spec tells you how to structure the file. It deliberately leaves room for the ecosystem to figure out what "good" looks like: whether the content is well-organized, whether the links work, whether the sections are arranged in a way that an AI system can navigate effectively, whether the file is even encoding-valid. Somebody gets to explore that space and propose answers. For llms.txt, we're one of the somebodies, and we consider that a privilege rather than a burden.

The 78.8% isn't a flaw in DocStratum or a gap in the spec. It's the natural consequence of a minimal format definition meeting real-world quality requirements. The spec defines the floor. DocStratum builds the rest of the building. The audit ensures you know which parts are which, so you can decide for yourself how many floors you need.

Where This Is Going

DocStratum started as a single-file validator. It's becoming something bigger.

The ecosystem pivot — documented in our v0.0.7 specification — redefines DocStratum as an ecosystem-level documentation quality platform. Instead of just validating one llms.txt file, it will validate an entire project's AI-documentation surface: the llms.txt navigation index, the llms-full.txt content dump, individual Markdown pages linked from the index, and instruction files. Not just whether each file is valid in isolation, but whether the relationships between them make sense. Does the index reference pages that exist? Does the aggregate actually contain the content it claims to? Are cross-references reciprocal?
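The first of those questions, whether the index references pages that exist, can be sketched as a set comparison. This is an illustration under stated assumptions: the function name is hypothetical, the link pattern is a simplified Markdown matcher, and "exists" here means "appears in a set of known page paths" rather than a filesystem or HTTP check.

```python
import re

# ASSUMPTION: simplified Markdown link matcher, [title](target).
LINK_RE = re.compile(r"\[([^\]]+)\]\(([^)\s]+)\)")


def dangling_links(index_text: str, known_pages: set[str]) -> list[str]:
    """Return link targets in the index that are not in known_pages."""
    targets = [url for _title, url in LINK_RE.findall(index_text)]
    return [url for url in targets if url not in known_pages]


index_text = (
    "# Project\n\n"
    "## Docs\n\n"
    "- [Guide](docs/guide.md): Start here\n"
    "- [API](docs/api.md): Reference\n"
)
print(dangling_links(index_text, {"docs/guide.md"}))
```

The reciprocal check (pages that exist but are never referenced from the index) is the same comparison with the operands swapped.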

The single-file validator doesn't get replaced. It becomes a component within the ecosystem validator. The 52 audited criteria still apply per-file. But now there's a layer above them that answers a question no other tool in the llms.txt space currently asks: "Is this project's AI-documentation ecosystem coherent?"

Every tool we've surveyed (75+ validators and generators) operates on a single file. Nobody validates the ecosystem. That's the gap we're building into.

The Irony, Which I Promise I Noticed

I built a validation tool for a standard defined by a blog post, audited my own tool's compliance with that blog post, and discovered that most of my tool exceeds the blog post's requirements. (Documentation-first developer problems.)

I have written more documentation about the llms.txt standard than the llms.txt standard contains. The audit document alone is longer than the spec. The evidence inventory I built to fact-check the paper is longer than the spec. The 149 ASoT standards files I've ratified for this project could probably, stacked end to end, stretch from the spec to the nearest competing standard and back. At this point, my commit messages about the spec might be longer than the spec.

This is either a testament to the spec's elegant minimalism or a sign that I need a hobby that doesn't involve Markdown. (I have one. It's a text-based dungeon crawler. It's also written in documentation-first C#. I may be beyond help.)

So Is DocStratum Useful or Not?

Extremely yes, and I'm biased, and both of those things are true simultaneously.

If you publish an llms.txt file and you want to know whether it's doing its job—not just whether it parses, but whether it's helping AI systems understand your documentation—DocStratum's 41 extensions are the most thorough quality framework we've seen for the format. They're based on empirical analysis, tested against real-world specimens, transparently labeled so you can see which recommendations are ours versus the spec's, and backed by 27 research documents that took us from "what does this spec actually say?" to "here are 22 named anti-patterns that make llms.txt files actively harmful."

The 78.8% is the product. The 11.5% is the floor. The audit is the receipt.

And if you're the kind of person who reads extension labeling audits for fun: there are 52 items to peruse, each with a rationale field. (I am constitutionally incapable of classifying something without explaining why.)

You're welcome.