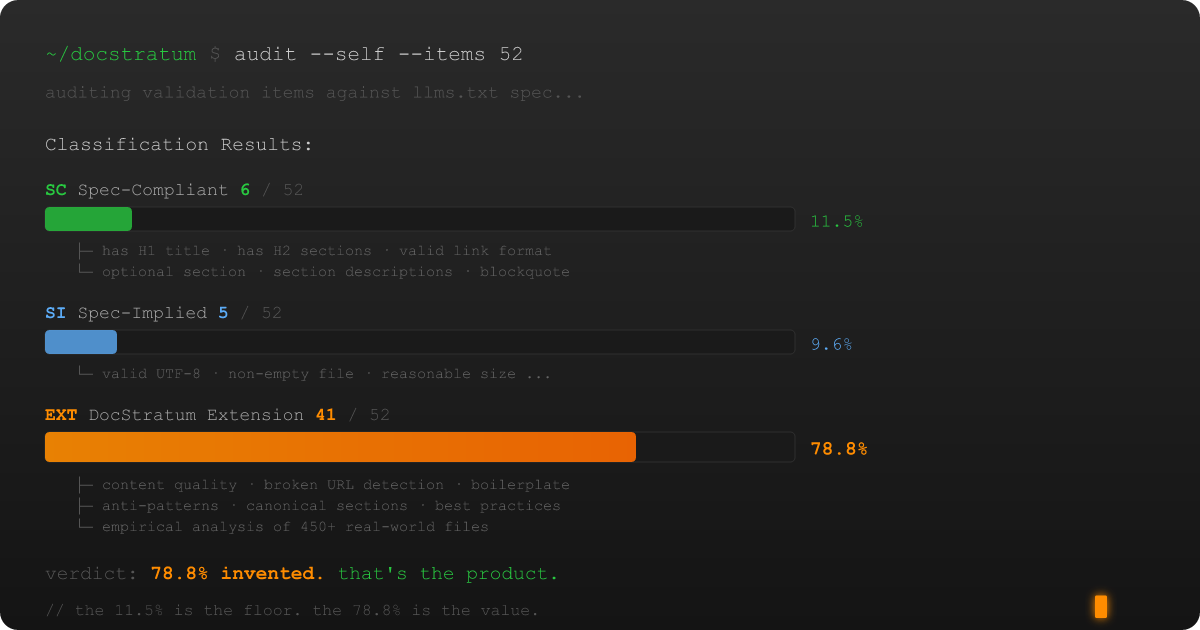

78.8% of My Validator Is Made Up (And That's the Point)

I recently did something that most software developers would consider either admirably honest or clinically inadvisable: I audited my own tool against the specification it claims to implement, wrote down the results in excruciating detail, and published them.

The tool is DocStratum, a documentation quality platform for llms.txt files. The project started with a thesis that most people in the AI tooling space either haven't considered or don't want to hear: a Technical Writer with strong Information Architecture skills can outperform a sophisticated RAG pipeline by simply writing better source material. Structure is a feature. DocStratum exists to prove it.

At its core, DocStratum is a validation framework — think ESLint, but for a Markdown standard defined by a blog post instead of a formal grammar. It checks your llms.txt file across five validation levels: basic parseability (L0), structural compliance (L1), content quality (L2), best practices (L3), and a full extended-quality tier (L4). It categorizes findings across 38 diagnostic codes using three severity levels (Error, Warning, Info). It detects anti-patterns — 22 of them, with names like "The Ghost File," "The Monolith Monster," and "The Preference Trap." It has opinions.
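To make that shape concrete, here is a minimal sketch of how findings, levels, and severities could fit together. It is not DocStratum's actual code: the names `Level`, `Severity`, `Finding`, and `highest_level_passed` are hypothetical, the diagnostic codes are placeholders, and the rule that "a file passes level N only if no Error sits at or below N" is an assumption made for illustration.

```python
from dataclasses import dataclass
from enum import Enum, IntEnum
from typing import Optional, Sequence


class Level(IntEnum):
    """The five validation levels named above (member names are illustrative)."""
    L0_PARSEABLE = 0
    L1_STRUCTURAL = 1
    L2_CONTENT = 2
    L3_BEST_PRACTICES = 3
    L4_EXTENDED = 4


class Severity(Enum):
    ERROR = "error"
    WARNING = "warning"
    INFO = "info"


@dataclass(frozen=True)
class Finding:
    code: str           # placeholder identifier, not a real diagnostic code
    level: Level        # the validation level this check belongs to
    severity: Severity
    message: str


def highest_level_passed(findings: Sequence[Finding]) -> Optional[Level]:
    """Return the highest level with no ERROR at or below it.

    Assumed rule: warnings and infos never block a level; an ERROR at
    level N caps the result at N - 1. Returns None if L0 itself fails.
    """
    failed = [f.level for f in findings if f.severity is Severity.ERROR]
    if not failed:
        return Level.L4_EXTENDED
    lowest_failure = min(failed)
    if lowest_failure == Level.L0_PARSEABLE:
        return None
    return Level(lowest_failure - 1)


# Hypothetical findings: a warning at L1 and an error at L3.
example = [
    Finding("X001", Level.L1_STRUCTURAL, Severity.WARNING, "placeholder warning"),
    Finding("X002", Level.L3_BEST_PRACTICES, Severity.ERROR, "placeholder error"),
]
result = highest_level_passed(example)
```

Under these assumptions, the warning at L1 does not block anything, while the error at L3 caps the file at L2. Whether a real validator should let warnings accumulate without consequence is a design choice this sketch deliberately leaves open.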

Those opinions, it turns out, are almost entirely my own invention. (Good.)