Context Windows Are a Lie (And Haiku Protocol Is My Coping Mechanism)

LLM vendors would like you to know that their latest model supports a 128,000-token context window. Some of them say 200,000. One of them, and I won't name names but their logo is a little sunset, says a million. A million tokens. That's more than the entirety of War and Peace (roughly 590,000 words in English), which is appropriate because trying to get useful work done at the far end of a million-token window is its own kind of Russian tragedy.

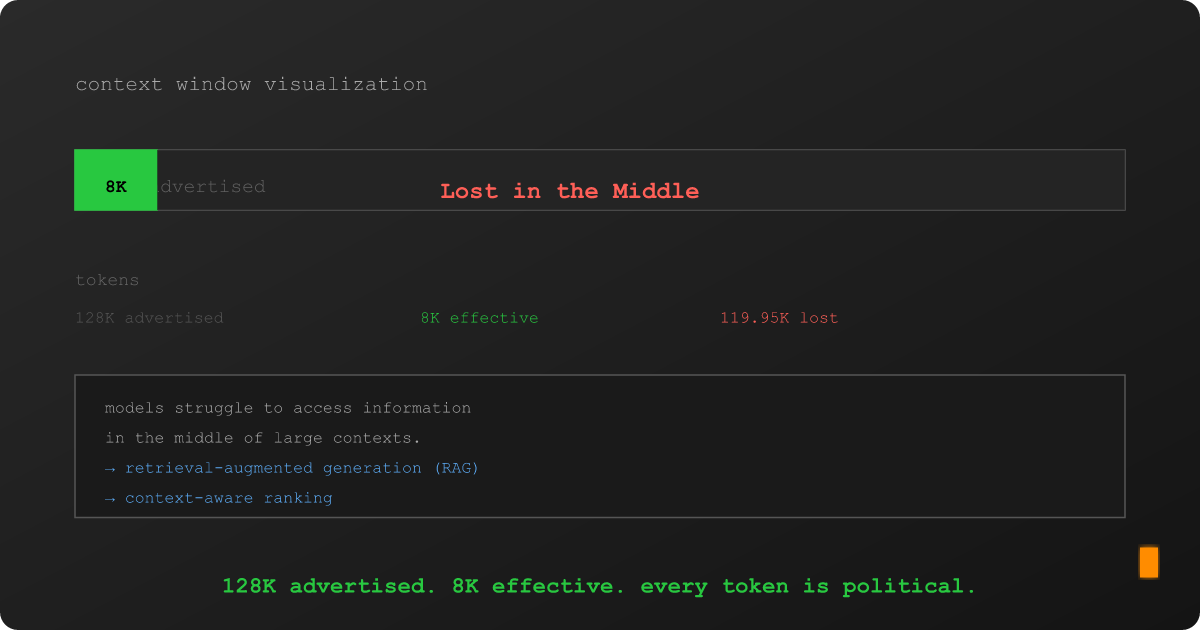

Here's what the marketing materials don't mention: the effective context window, the portion where the model actually pays reliable attention to what you put there, is dramatically smaller. Research from Stanford, Berkeley, and others has converged on a finding that would be funny if it weren't costing people real money: models struggle with information placed in the middle of long contexts. They're great at the beginning. They're decent at the end. The middle? The middle is where facts go to die quietly, unnoticed, like a footnote in a terms of service agreement.

This is the "Lost in the Middle" problem, and if you're building anything that retrieves information and feeds it to a language model (which, in 2026, is approximately everyone), it means the number on the tin is a fantasy. Your 128K window is functionally an 8K window with 120K tokens of expensive padding.
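If you'd rather measure the effect than take my word for it, the usual probe is to hide one known fact at different depths in a pile of filler and check whether the model can still recall it. Here is a minimal sketch of that idea, not the exact setup from any particular paper or from my own accidental experiments. The names `build_prompt`, `position_sweep`, and `ask_model` are hypothetical; `ask_model` stands in for whatever function sends a prompt to your model and returns its text reply.

```python
from typing import Callable, Dict, List, Sequence


def build_prompt(filler: List[str], needle: str, depth: float, question: str) -> str:
    """Insert `needle` among `filler` passages at a relative depth.

    depth=0.0 puts the needle first, depth=1.0 puts it last,
    and depth=0.5 puts it in the middle of the context.
    """
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    index = round(depth * len(filler))
    passages = filler[:index] + [needle] + filler[index:]
    return "\n\n".join(passages) + "\n\nQuestion: " + question


def position_sweep(
    ask_model: Callable[[str], str],  # hypothetical: prompt in, reply text out
    filler: List[str],
    needle: str,
    question: str,
    expected: str,
    depths: Sequence[float] = (0.0, 0.25, 0.5, 0.75, 1.0),
) -> Dict[float, bool]:
    """Ask the same question with the needle at each depth.

    Returns a map from depth to whether the reply contained `expected`
    (a crude case-insensitive substring check, assumed good enough here).
    """
    results: Dict[float, bool] = {}
    for depth in depths:
        reply = ask_model(build_prompt(filler, needle, depth, question))
        results[depth] = expected.lower() in reply.lower()
    return results
```

Run it with enough filler to approach the advertised window, repeat it a few times per depth, and look for results that pass at the edges and fail in the middle. A single run at each depth is an anecdote, not a measurement.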

I know this because I ran the experiment. Accidentally. Three times.