I Write the Docs Before the Code, and Yes, I Know That's Weird

I have a confession to make. When I start a new project (any project, it doesn't matter what), the first thing I do is open a Markdown file and start writing documentation for something that doesn't exist yet.

Not code. Not a prototype. Not even a to-do list. Documentation.

I realize this makes me sound like the kind of person who reads the terms of service before clicking "I Agree." I promise I'm not. (I absolutely am.)

The Docs-First Affliction

There's a term for this in software circles: "documentation-driven development." It sounds very official and intentional, like something a thoughtful engineering team adopts after a retrospective. In my case, it's more of a compulsion. I'm a technical writer by trade, and somewhere along the way my brain got wired to believe that if something isn't documented, it doesn't really exist.

This means that before I write a class, I've already written the spec that describes the class. Before I write a test, I've already written the test plan that describes what the test should cover and why. Before writing this blog post, I wrote a content outline, a publication schedule, and a front matter template. It's documentation all the way down.

You might think this slows me down. You'd be right. You might also think it produces better outcomes when I eventually do write code. You'd also be right about that.

The tradeoff is that I start every project with a pile of Markdown files and zero functioning software, which looks, to the outside observer, like I've accomplished nothing. But those Markdown files? They've saved me from building the wrong thing more times than I can count. Turns out, it's really hard to write a clear product requirements spec for a bad idea. The spec fights back. It asks questions you didn't want to answer. It exposes assumptions you were hoping to sneak past yourself.

The Menagerie

This docs-first habit has produced, over time, a slightly eclectic collection of projects. Each one started as a Markdown file that got increasingly detailed until I had no choice but to actually build the thing.

Lexichord started as a frustrated manifesto. I was watching every AI writing tool on the market treat "style guide compliance" as a system prompt afterthought: just paste your brand voice into a text box and hope for the best. Anyone who's ever maintained a real enterprise style guide (with terminology databases, audience-specific complexity rules, document-type-specific formatting, and the seventeen exceptions to every rule) knows that's not how any of this works. So I wrote a spec for what an AI orchestration tool for technical writers should look like. Not a chatbot with a style guide bolted on, but a proper orchestration layer that coordinates multiple AI capabilities around a writer's actual workflow. Then I had to go build it, because the spec was too compelling to ignore.

FractalRecall started as a question scribbled in a notebook: "What if metadata isn't the thing you attach to embeddings after you create them, but the thing that should shape how you create them in the first place?" That question turned into a design spec. The design spec turned into a system that treats metadata as structural DNA, something that fundamentally informs how data gets vectorized, rather than just tagging it after the fact. Most RAG pipelines treat metadata as a post-retrieval filter. FractalRecall argues that's backwards. The jury is still out on whether I'm onto something or just being stubborn. (These are not mutually exclusive.)
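FractalRecall's actual design isn't described in this post, so what follows is only a generic toy illustration of the distinction between the two philosophies, not FractalRecall's method. It assumes a hashed bag-of-words function as a stand-in for a real embedding model, and every name in it (`toy_embed`, `embed_content_only`, `embed_with_metadata`, `post_filter`) is hypothetical. In the first approach metadata never touches the vector and is only used to filter results afterward; in the second, metadata is serialized into the text before vectorization, so it changes where the document lands in vector space.

```python
import hashlib
import math

DIM = 256  # toy vector width; real embedding models are learned, not hashed


def toy_embed(text: str) -> list[float]:
    """Hashed bag-of-words vector, normalized to unit length (stand-in for a real model)."""
    vec = [0.0] * DIM
    for token in text.lower().split():
        digest = hashlib.sha256(token.encode()).digest()
        vec[int.from_bytes(digest[:4], "little") % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def embed_content_only(doc: dict) -> list[float]:
    # Conventional pipeline: metadata plays no part in the vector itself.
    return toy_embed(doc["text"])


def embed_with_metadata(doc: dict) -> list[float]:
    # Metadata-first illustration: metadata is serialized into the text that is
    # vectorized, so two documents with identical text but different metadata
    # end up with different vectors.
    prefix = " ".join(f"{key}:{value}" for key, value in sorted(doc["meta"].items()))
    return toy_embed(f"{prefix} {doc['text']}")


def post_filter(results: list[dict], **required) -> list[dict]:
    # The "after the fact" alternative: drop retrieved results whose metadata
    # doesn't match the required key/value pairs.
    return [r for r in results if all(r["meta"].get(k) == v for k, v in required.items())]
```

With content-only embeddings, a novice tutorial and an expert reference that share the same sentence are indistinguishable until the filter runs; with metadata folded in, they are already apart at retrieval time.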

DocStratum is exactly what it sounds like if you're the kind of person who names validation tools after geological layering processes. It validates llms.txt files against the spec (think ESLint, but for a Markdown standard that's defined by a blog post rather than a formal grammar). More on llms.txt in a moment, because that particular rabbit hole deserves its own section.
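To make the ESLint comparison concrete, here is a toy lint pass in Python. The rules are a simplified reading of the llms.txt proposal (an H1 title as the first line, a single H1, and Markdown-link list items under H2 sections); they are not DocStratum's rule set, and `lint_llms_txt` and `Issue` are hypothetical names.

```python
import re
from dataclasses import dataclass

# A list item of the form "- [name](url)" with optional ": notes".
LINK_ITEM = re.compile(r"^- \[(?P<name>[^\]]+)\]\((?P<url>[^)\s]+)\)(?::\s*(?P<notes>.*))?$")


@dataclass
class Issue:
    line: int  # 1-based line number, like a linter would report
    message: str


def lint_llms_txt(text: str) -> list[Issue]:
    """Toy lint pass over an llms.txt file (simplified, hypothetical rules)."""
    issues: list[Issue] = []
    lines = text.splitlines()

    # Rule 1: the first non-blank line must be an H1 title (the only required element).
    first = next((i for i, line in enumerate(lines) if line.strip()), None)
    if first is None or not lines[first].startswith("# "):
        issues.append(Issue((first or 0) + 1, "file must start with an H1 title"))

    # Rule 2: there should be exactly one H1.
    h1_count = sum(1 for line in lines if line.startswith("# "))
    if h1_count > 1:
        issues.append(Issue(1, f"expected one H1, found {h1_count}"))

    # Rule 3: list items inside H2 sections should be Markdown links.
    in_section = False
    for number, line in enumerate(lines, start=1):
        if line.startswith("## "):
            in_section = True
        elif in_section and line.startswith("- ") and not LINK_ITEM.match(line):
            issues.append(Issue(number, "list item is not a [name](url) link"))
    return issues
```

Reporting issues with line numbers instead of failing on the first problem mirrors how ESLint-style tools let you fix everything in one pass.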

Rune & Rust is the odd one out and I love it for that. It's a text-based dungeon crawler written in C#, which in 2026 makes me either charmingly retro or deeply out of touch, depending on who you ask. No rendering engine, no sprite sheets, just state machines and narrative branching and the quiet satisfaction of modeling combat mechanics with pattern matching expressions. I built it partly because game logic is an incredible workout for design patterns, and partly because sometimes you just need to write code that stabs a goblin instead of parsing a compliance document.

And then there's LlmsTxtKit, which brings us to the rabbit hole.

How I Accidentally Became an llms.txt Researcher

It started, as these things often do, with a problem that seemed small.

I was poking around the AI tooling landscape (as one does when one has a docs-first compulsion and a GitHub account) and I noticed something odd. The llms.txt standard, proposed by Jeremy Howard in late 2024, was gaining real traction: community directories listing hundreds of implementations, big names like Anthropic and Cloudflare publishing files, and a growing ecosystem of tools. The idea is elegant: put a Markdown file at /llms.txt on your site that gives AI systems a curated, structured summary of your content. Instead of forcing a language model to parse your entire HTML soup at inference time, you hand it the good stuff on a silver platter.
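The proposal's format is deliberately simple: an H1 with the site or project name (the only required part), an optional blockquote summary, and H2-delimited sections whose list items are Markdown links, optionally followed by a colon and notes. A minimal parser sketch, in Python rather than C#, might look like this. It is not LlmsTxtKit's API; `parse_llms_txt`, `LlmsTxt`, and `Link` are hypothetical names, and it skips free-form prose and multi-line blockquotes.

```python
import re
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Link:
    name: str
    url: str
    notes: str = ""


@dataclass
class LlmsTxt:
    title: str = ""
    summary: str = ""
    sections: dict[str, list[Link]] = field(default_factory=dict)


# "- [name](url)" with optional ": notes"
LINK = re.compile(r"^-\s*\[([^\]]+)\]\(([^)\s]+)\)(?::\s*(.*))?$")


def parse_llms_txt(text: str) -> LlmsTxt:
    doc = LlmsTxt()
    current: Optional[str] = None  # name of the H2 section being read, if any
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# ") and not doc.title:
            doc.title = line[2:].strip()
        elif line.startswith("> ") and current is None and not doc.summary:
            doc.summary = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            doc.sections[current] = []
        elif current is not None and (m := LINK.match(line)):
            doc.sections[current].append(Link(m[1], m[2], m[3] or ""))
    return doc
```

Once the file is a structure rather than a string, the later pipeline stages (validation, caching, context generation) can work with titles, sections, and links instead of re-parsing Markdown each time.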

Great concept. I started looking for tools to work with it. Python module? Yep. JavaScript implementation? Sure. VitePress plugin, Docusaurus plugin, PHP library, Drupal module? All present. C#/.NET implementation? Crickets. Not a single one.

Now, I'm a C# developer. I like C#. It has nullable reference types and pattern matching and file-scoped namespaces and it doesn't make me manage my own memory like some kind of 1990s survivalist. So naturally, I decided to build the .NET llms.txt library myself. Docs first, obviously. I wrote the product requirements spec. I wrote the design spec. I started sketching out the architecture: parsing, fetching, validation, caching, context generation, the whole pipeline.

And that's when I tripped over the rabbit hole.

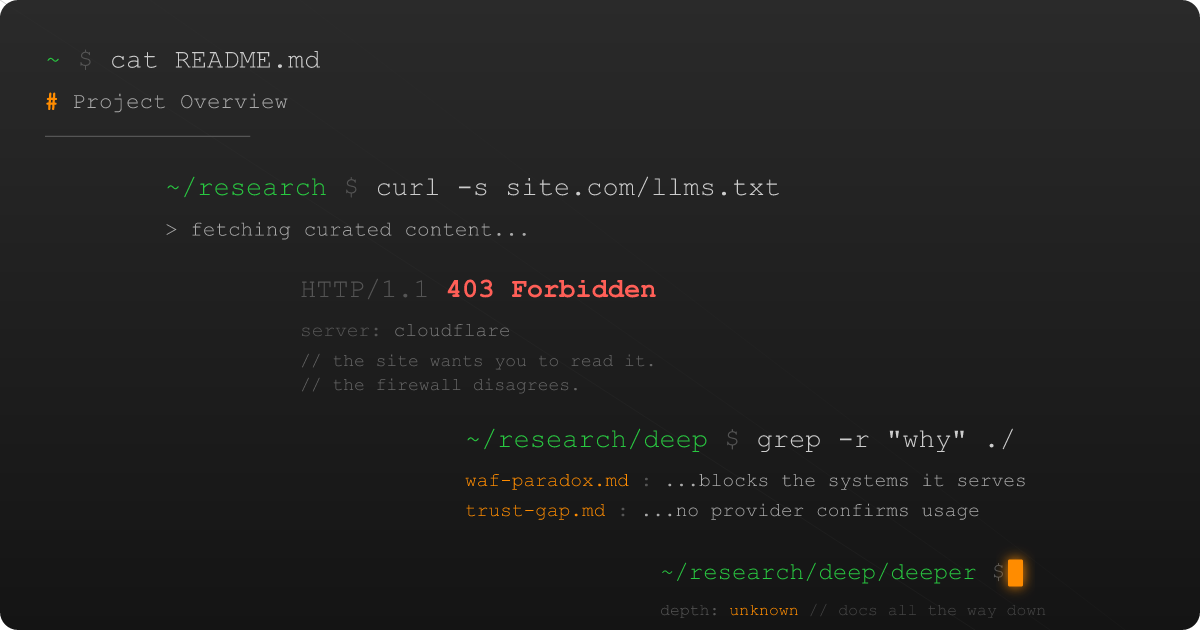

The Rabbit Hole Has a Firewall

See, when you build a tool that fetches llms.txt files from the open web, you quickly discover something that almost nobody is talking about: the web doesn't want to let you.

Cloudflare sits in front of roughly 20% of all public websites. In July 2025, they started blocking AI crawlers by default on new domains. They called it "Content Independence Day," a sequel to their 2024 "Declare your AIndependence" one-click bot-blocking campaign, which is the kind of branding that makes you wonder if there's a pun committee at Cloudflare and whether they're hiring.

The blocking happens because AI crawlers (programs that fetch web pages for language models) trip every bot-detection heuristic in the book. They don't execute JavaScript. They don't maintain cookies. They come from data center IP ranges. They use non-browser user agents. To a Web Application Firewall, they look indistinguishable from a DDoS attack with a liberal arts degree.

Here's the paradox: a site owner creates an llms.txt file specifically to help AI systems read their content. Their hosting infrastructure then blocks the AI systems that try to read it. The AI systems, unable to fetch the curated content, fall back to search APIs and get whatever Google decides to surface instead. Everyone involved is doing exactly what they're supposed to do. The system still doesn't work.

I found this fascinating. Not "mildly interesting" fascinating. "I need to write a 10,000-word analytical paper about this" fascinating.

Docs-First, Research Second, Code... Eventually

So that's what I'm doing. The llms.txt rabbit hole has expanded into a full research initiative spanning three interconnected projects:

"The llms.txt Access Paradox" is an analytical paper that documents this whole mess: the gap between how the standard was designed to work, how it actually works in practice, and why the infrastructure itself is the biggest obstacle. It covers the inference gap (no major AI provider has confirmed they use llms.txt at inference time), the WAF paradox (your own security tools block the very systems you're trying to help), the trust problem (Google's John Mueller compared llms.txt to the discredited keywords meta tag, and he's not entirely wrong), and the fragmentation issue (llms.txt, Content Signals, CC Signals, and IETF aipref are all trying to solve overlapping problems in incompatible ways).

LlmsTxtKit is the C# library that started this whole journey. It parses, fetches, validates, caches, and generates context from llms.txt files. It also ships as an MCP server (that's the Model Context Protocol from Anthropic) so AI agents can use it as a tool directly. The design is documentation-first (obviously), and the library handles the WAF-blocking problem gracefully instead of just throwing an exception and calling it a day.
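LlmsTxtKit's actual API isn't shown here, so this is a hedged, language-neutral sketch of the "don't just throw" idea, written in Python: the fetcher turns an HTTP response into a result value that says *why* it failed. The detection heuristics (a `cf-mitigated: challenge` header, or a 403/429/503 from a server identifying as Cloudflare) are assumptions for illustration and far from exhaustive, and `classify_response`, `FetchStatus`, and `FetchResult` are hypothetical names.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Optional


class FetchStatus(Enum):
    SUCCESS = auto()
    NOT_FOUND = auto()
    BLOCKED = auto()             # looks like a WAF / bot-management block
    UNEXPECTED_CONTENT = auto()  # e.g. an HTML page where Markdown was expected
    ERROR = auto()


@dataclass
class FetchResult:
    status: FetchStatus
    body: Optional[str] = None
    reason: str = ""


def classify_response(code: int, headers: dict, body: str) -> FetchResult:
    """Map a raw HTTP response to a result value instead of raising an exception."""
    h = {k.lower(): v.lower() for k, v in headers.items()}
    # Assumed heuristic: Cloudflare marks challenge responses with this header.
    if h.get("cf-mitigated") == "challenge":
        return FetchResult(FetchStatus.BLOCKED, reason="Cloudflare challenge")
    # Assumed heuristic: deny or rate-limit codes served from a Cloudflare edge.
    if code in (403, 429, 503) and "cloudflare" in h.get("server", ""):
        return FetchResult(FetchStatus.BLOCKED, reason=f"HTTP {code} from Cloudflare")
    if code == 404:
        return FetchResult(FetchStatus.NOT_FOUND, reason="no llms.txt at this path")
    if 200 <= code < 300:
        if h.get("content-type", "").startswith("text/html"):
            return FetchResult(FetchStatus.UNEXPECTED_CONTENT, reason="HTML instead of Markdown")
        return FetchResult(FetchStatus.SUCCESS, body=body)
    return FetchResult(FetchStatus.ERROR, reason=f"HTTP {code}")
```

A caller can then branch on `FetchStatus.BLOCKED` (retry later, tell the user the site's firewall is in the way, or fall back to another source) rather than catching a generic exception and losing the distinction between "no llms.txt here" and "llms.txt exists but you can't have it."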

The Context Collapse Mitigation Benchmark is the empirical study asking the question that actually matters: does any of this make a difference? If you feed an LLM curated Markdown via llms.txt instead of raw HTML scraped from the web, does it give better answers? I'm running a controlled experiment with paired conditions across 30–50 websites, scoring factual accuracy, hallucination rates, and citation fidelity. If the answer is "no, it doesn't matter," that's a valid and publishable finding. Science doesn't care about your hypothesis.
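The benchmark's full analysis plan isn't spelled out here, so as a hedged sketch: in a paired design each site contributes one score per condition, and the natural unit of analysis is the per-site difference. The following computes the mean paired difference and a paired t statistic for a single metric. `PairedScore` and `paired_summary` are hypothetical names, and a real study might prefer a Wilcoxon signed-rank test or a bootstrap interval, which this sketch doesn't implement.

```python
import math
import statistics
from dataclasses import dataclass


@dataclass
class PairedScore:
    site: str
    markdown: float  # metric score with curated llms.txt Markdown as context
    html: float      # metric score with raw scraped HTML as context


def paired_summary(scores: list[PairedScore]) -> dict:
    """Mean paired difference (markdown - html) and a paired t statistic."""
    diffs = [s.markdown - s.html for s in scores]
    n = len(diffs)
    if n < 2:
        raise ValueError("need at least two paired sites")
    mean_d = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # sample standard deviation of the differences
    if sd == 0:
        # Every site moved by the same amount; t is undefined, so report its limit.
        t = 0.0 if mean_d == 0 else math.copysign(math.inf, mean_d)
    else:
        t = mean_d / (sd / math.sqrt(n))
    return {"n": n, "mean_diff": mean_d, "t": t}
```

For a metric where lower is better, such as hallucination rate, a negative mean difference favors the Markdown condition. Turning `t` into a p-value needs the t distribution (for example from SciPy), which is left out to keep the sketch dependency-free.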

And yes, I wrote the research proposal, the roadmap, the paper outline, and the benchmark methodology spec before writing any experiment code. The docs-first affliction is real.

What This Blog Is Now

If you read the earlier posts on this site, you'll notice they were... let's call them "warming up." A welcome post. A Docusaurus setup walkthrough. Perfectly fine content, but not exactly what keeps someone's RSS feed interesting.

Going forward, this blog is where all of this work gets published. The llms.txt research has a dedicated eight-post series planned, starting with the WAF story (because it's a genuinely good story and I have opinions), moving through the research findings, the .NET ecosystem gap, GEO (generative engine optimization) practices, and eventually the benchmark results.

But it's not going to be all llms.txt, all the time. I have posts planned about FractalRecall's metadata-as-DNA approach to embeddings, about what building a dungeon crawler teaches you about state machines (more than you'd expect), about why enterprise AI tools keep failing technical writers, and about the general experience of being a C# developer in a Python-dominated AI ecosystem. It's lonely out here. We have great pattern matching and nobody to share it with.

The common thread across all of it is the docs-first philosophy: understand the problem deeply, write it down clearly, and then build something worth building. Every project I work on starts with documentation that could stand on its own, because if you can't explain what you're building and why, you probably shouldn't be building it.

Before You Go

If any of this resonates (the documentation-first approach, the C# advocacy, the "wait, firewalls block the thing they're supposed to serve?" reaction), stick around. Subscribe to the RSS feed if that's your thing. The llms.txt research is ongoing, the tools are taking shape, and I guarantee the benchmark results are going to make at least one person on LinkedIn very uncomfortable.

And if you're a .NET developer who's been quietly wondering why the entire AI ecosystem seems to pretend C# doesn't exist: you're not alone. Pull up a chair. We have work to do.