The 844,000 Sites That Weren't: How an AI Adoption Stat Fell Apart Under Scrutiny

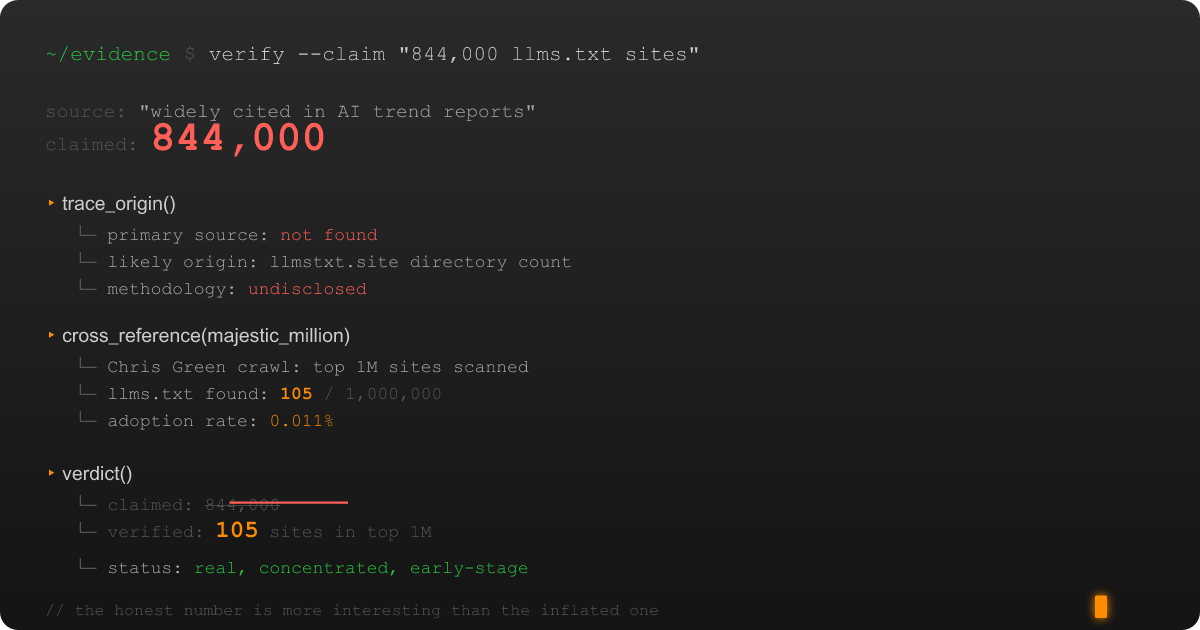

I need to tell you about a number. It's a number that shows up in blog posts and LinkedIn threads and conference talks and those AI trend reports that get passed around Slack channels like contraband. The number is 844,000, and it's supposedly how many websites have adopted the llms.txt standard.

I encountered this number while building the evidence inventory for an analytical paper about llms.txt (the Markdown-based content discovery format proposed by Jeremy Howard in September 2024). Because I am the kind of person who builds evidence inventories before writing papers, the kind of person who catalogs every factual claim and traces it back to a primary source before committing a single sentence to a draft, I decided to verify it.

I should not have done this on a weeknight. The verification process involved what I can only describe as the five stages of grief, but for statistics.

Stage 1: Acceptance (Premature)

The claim seemed straightforward enough. The llms.txt ecosystem has been growing since the spec launched in late 2024. Community directories exist. Big-name companies like Anthropic, Cloudflare, Stripe, and Vercel have published llms.txt files. There's momentum. There's energy. There's a community of people who genuinely believe in the standard's potential — and I count myself among them, given that I'm building tools for it, publishing an llms.txt file on this site, and writing an analytical paper about its ecosystem. Eight hundred forty-four thousand sounded high, sure, but maybe not impossibly high. The internet is a big place. Maybe I just underestimated how quickly a good idea spreads.

So I went looking for the primary source. You know, the actual methodology, the crawl data, the published study that counted these files and arrived at precisely "844,000+."

I'm still looking.

Stage 2: Bargaining

Let me be precise about what I found, because precision matters when you're about to tell an entire community that one of their most-cited statistics might not mean what they think it means.

The community directories linked from the official llms.txt specification? One lists approximately 784 entries. The other lists about 684. Not 784,000. Seven hundred and eighty-four. Even if you simply add the two lists together and ignore the overlap between them, you get roughly 1,468 sites, and the real union is smaller than that.

"Okay," I told myself, because I am nothing if not willing to give a number the benefit of the doubt before I start writing its obituary, "maybe the 844K figure comes from a broader crawl. Maybe someone actually scanned the internet."

Someone did. Rankability's 2025 adoption report crawled approximately 300,000 domains and found about 10% had llms.txt files. That's 30,000 — genuinely impressive, and also roughly 814,000 fewer than the claim. But as I covered in Part 2, that 10% depends entirely on which domains you crawl. A sample skewed toward developer documentation and tech companies will find higher adoption rates. Samples are not populations, and extrapolating from a self-selecting sample to "the internet" is the statistical equivalent of surveying a Star Trek convention and concluding that 94% of humans own Vulcan ears.
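To make the sampling problem concrete, here's a small Python sketch. The strata and per-stratum adoption rates are invented for illustration (they are not measurements of anything); the point is only that the same web can produce a roughly 10% hit rate or a roughly half-percent hit rate depending on which domains the crawler visits. The last lines redo the Rankability arithmetic from the paragraph above.

```python
# Hypothetical strata: name -> (share of the web, llms.txt adoption rate in that stratum).
# All numbers here are invented for illustration; none are measured values.
STRATA = {
    "developer_docs": (0.01, 0.30),
    "other_tech": (0.04, 0.05),
    "everything_else": (0.95, 0.0005),
}


def adoption_rate(composition: dict[str, float]) -> float:
    """Expected share of llms.txt hits in a crawl whose mix of strata is `composition`."""
    total = sum(composition.values())
    return sum(weight * STRATA[name][1] for name, weight in composition.items()) / total


# A crawl whose mix mirrors the (hypothetical) web as a whole.
population_rate = adoption_rate({name: share for name, (share, _) in STRATA.items()})

# A crawl skewed toward developer documentation and tech companies.
skewed_rate = adoption_rate({"developer_docs": 0.3, "other_tech": 0.3, "everything_else": 0.4})

# The arithmetic from Rankability's report: about 10% of about 300,000 crawled domains.
rankability_hits = round(300_000 * 0.10)
shortfall = 844_000 - rankability_hits

print(f"population rate: {population_rate:.2%}")  # 0.55%
print(f"skewed-sample rate: {skewed_rate:.2%}")   # 10.52%
print(f"Rankability hits: {rankability_hits:,}; shortfall vs. 844K: {shortfall:,}")
```

Same hypothetical web, same adoption, a nearly twentyfold difference in the reported rate. That's why a 10% hit rate from a tech-heavy crawl can't be multiplied out across the whole internet.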

Stage 3: Depression

Then I pulled up the data that made me close my laptop and go make coffee.

Chris Green's Majestic Million analysis (the same dataset I examined in Part 2) found exactly 105 sites with llms.txt files among the million sites in Majestic's ranking, which orders domains by how many distinct IP subnets link to them rather than by traffic. Among the top 1,000? Zero. Not "nearly zero." Not "a few early adopters." Zero.

Let me put those numbers side by side, because I think the contrast is worth sitting with. The claim floating through AI discourse is 844,000+ sites. The verifiable reality: 784 directory listings, 105 files in the top million, and zero in the top thousand. That's a gap between narrative and evidence that you could park several data centers inside.

Stage 4: Anger (Constructive)

I should note the irony: I'm spending my evenings building a .NET library, a validation framework, and an analytical paper for this standard. If I didn't believe in llms.txt's potential, I would not be deep enough in its ecosystem to notice that a number was wrong. The anger here isn't directed at the community. It's directed at the gap between what the community deserves — honest, defensible data — and what it's been working with.

Now, I want to be clear: I'm not accusing anyone of deliberately fabricating a number. I wasn't able to trace the 844K figure to a single origin point with a clear methodology, which means it may have emerged from some combination of misinterpretation, aggregation errors, optimistic extrapolation, and the telephone game that happens when an analyst report cites a trade article citing a blog post that cited a tweet that cited a conference slide that cited vibes.

This happens in every fast-moving technology space. A number gets attached to a narrative, the narrative gets repeated, and eventually the number achieves a kind of undead immortality where it shambles through LinkedIn posts and analyst reports long after anyone remembers where it came from or whether it was ever verified. I've seen it happen with cloud adoption rates, container usage figures, and the perennial claim that "X% of digital transformations fail" (the origin of that statistic is a Dilbert comic, and I am not joking).

What makes the 844K figure specifically worth examining is that it reinforces a particular narrative: that llms.txt has already achieved significant mainstream adoption, that the standard is gaining real traction across the web, and that the ecosystem is mature enough to justify building tools and workflows around it.

The real data tells a different story, and it's actually a more interesting story.

Stage 5: Acceptance (Actual)

Here's what the verified adoption data actually shows, and why I think it's more compelling than the inflated number ever was.

As I documented in Part 2, llms.txt adoption is real but extremely concentrated. The biggest names (Anthropic, Cloudflare, Stripe, Vercel, Coinbase) have impressive implementations. Anthropic's llms.txt alone is 8,364 tokens; its llms-full.txt is 481,349 tokens. These are serious, curated documents maintained by organizations that understand the standard's value proposition.

But outside the developer-tools bubble? The standard hasn't crossed over. Healthcare, education, government, news, e-commerce: the sectors that make up the vast majority of the web haven't adopted llms.txt, and even if they did today, the infrastructure paradox means their web application firewalls (WAFs) would probably block the AI crawlers anyway.

This is the story the honest numbers tell: llms.txt is a young standard in its earliest adoption phase, concentrated among the developer-documentation community that understands its value proposition best. That's not a failure. That's how standards work. RSS, Markdown itself, robots.txt — they all started as niche tools adopted by the people closest to the problem before reaching broader audiences. But it's a very different narrative from "844,000 sites and growing," and the decisions people make about whether to invest in llms.txt should be based on verified reality, not on a number that dissolves under scrutiny like sugar in hot coffee.

Why I'm Publishing This

I write about llms.txt because I think the standard has genuine potential. I'm building tools for it. I've spent weeks researching its ecosystem. I published an llms.txt file on this very site. I am not a detractor. I am, if anything, an overly enthusiastic supporter who happens to also be the kind of person who fact-checks his own enthusiasm before publishing it.

And that's exactly why the number matters. If the llms.txt community wants to be taken seriously by the broader web ecosystem (by CMS platforms, by hosting providers, by the CDN companies whose WAFs are blocking the standard), it needs to lead with honest data. The corrected numbers (roughly 784 directory entries, 105 sites in the Majestic Million, zero in its top 1,000) describe a standard that is still early and concentrated. That story is honest, defensible, and a perfectly fine foundation for an argument that the standard deserves investment and attention.

The 844K number, by contrast, tells a story that crumbles under the first hard question anyone asks. And in a space where trust and credibility are already in short supply (Google's John Mueller compared llms.txt to the discredited keywords meta tag, remember), the last thing the community deserves is to have its credibility anchored to a number that can't survive peer review.

What I Learned About Verification

The 844K number wasn't the only claim that fell apart when I checked it. Before I wrote a single paragraph of the analytical paper behind this series, I built an evidence inventory: every factual assertion mapped to a primary source, every source independently verified. The 844K figure was simply the most visible casualty.
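For anyone wondering what an evidence inventory looks like in its simplest form, here's a minimal Python sketch. It assumes the barest possible shape: each claim records its primary source (if one has been traced) and whether that source has been checked directly. The `Claim` class and `blocked_claims` function are hypothetical names for illustration, not my actual tooling.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    """One factual assertion destined for the draft (hypothetical structure)."""
    text: str
    primary_source: Optional[str] = None  # None until a primary source is traced
    independently_verified: bool = False  # set only after checking the source itself


def blocked_claims(inventory: list[Claim]) -> list[str]:
    """Claims that can't go in the draft: no primary source, or the source isn't verified."""
    return [
        c.text
        for c in inventory
        if c.primary_source is None or not c.independently_verified
    ]


# Illustrative entries drawn from this post.
inventory = [
    Claim(
        "Sites in the Majestic Million with llms.txt: 105",
        primary_source="Chris Green's Majestic Million analysis",
        independently_verified=True,
    ),
    Claim("844,000+ sites have adopted llms.txt"),  # no methodology or origin found
]

print(blocked_claims(inventory))
```

The rule is deliberately blunt: a claim with a source that nobody has actually opened is treated the same as a claim with no source at all.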

The full story of what that inventory caught, and why I think every technical writer should build one before they publish anything with factual claims, deserves its own post. That's next.

The Real Adoption Picture

So where does llms.txt adoption actually stand? I covered the full dataset in Part 2. The short version: the scale is measured in hundreds of directory listings and roughly one in ten thousand of the top million websites, not hundreds of thousands. The trajectory is promising (Anthropic, Cloudflare, Stripe, and Vercel don't adopt formats on a whim), but the distribution is concentrated among early believers rather than spanning the broader web. That's where every good standard starts. It's just not where the 844K number claims it already is.

I'll keep watching the numbers. I'll keep verifying them. And if the day comes when 844,000 sites genuinely have llms.txt files, I'll be the first to write the correction to this correction.

I just want to see the primary source first.