The llms.txt Access Paradox: The Data Nobody Wants to Hear

In Part 1, I told the story of discovering that my own hosting infrastructure was blocking AI crawlers from reading the llms.txt file I'd specifically published for them. A Web Application Firewall (WAF), the security layer that inspects every inbound HTTP request, can't tell the difference between "AI system reading curated content as intended" and "malicious bot probing endpoints for vulnerabilities," and the result is a paradox that would be hilarious if it weren't also my actual production environment.

That was the personal version, the "I discovered this at 11 PM and said words I can't publish on a professional blog" version. This is the systemic version. The one where I pull at the thread and the whole sweater starts to unravel.

Because once I started asking "how widespread is this?", the answers didn't just confirm the WAF problem. They complicated the entire premise of what llms.txt is supposed to do. And I mean the entire premise.

llms.txt Adoption: What the Numbers Actually Show

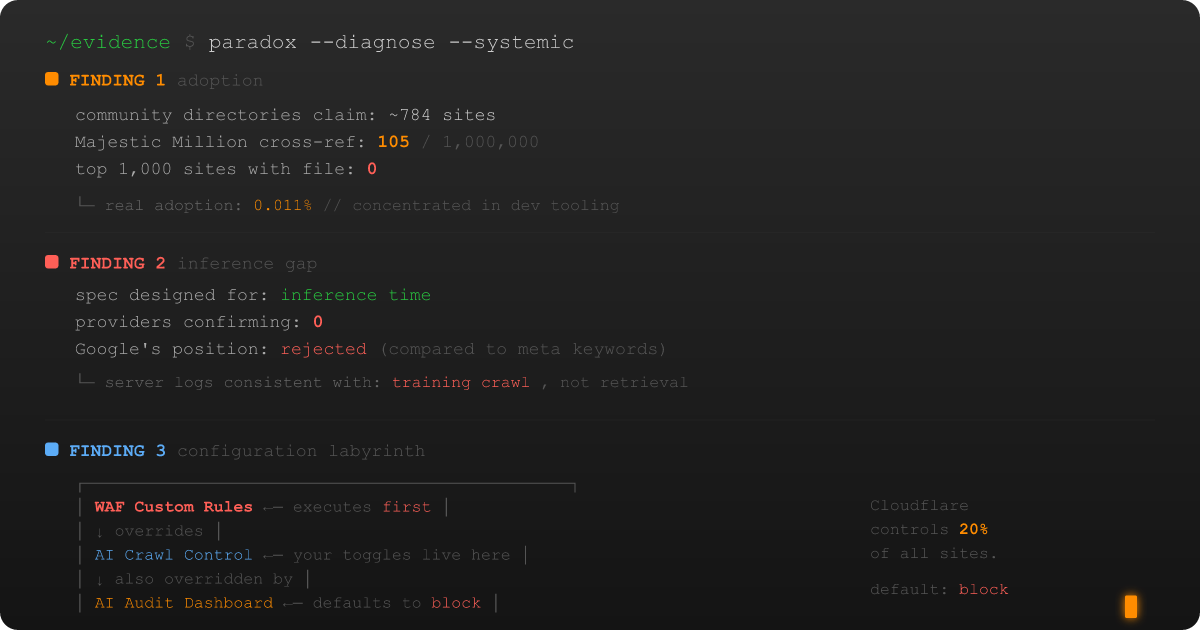

The adoption picture for llms.txt (the Markdown-based content discovery format proposed by Jeremy Howard in September 2024) is more complicated than the community directories suggest.

Community directories list hundreds of implementations: llmstxt.site has roughly 784 entries, and directory.llmstxt.cloud has around 684. A Rankability crawl of approximately 300,000 domains found that about 10% had llms.txt files. Ten percent! That sounds like a real standard gaining real traction, until you look at where those files are.

Cross-reference against the Majestic Million (Majestic's list of the one million domains with the most referring subnets, which is a link-based measure of prominence rather than a traffic ranking) and the picture sharpens dramatically. Chris Green's analysis found only 105 sites with llms.txt files as of May 2025. That's roughly 0.011% of the top million. Among the top 1,000? Zero. Not "a few." Not "we're getting there." Zero.
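The percentage is easy to check by hand. A minimal sketch of the arithmetic, using only the figures quoted above (the helper name `adoption_rate` is just for illustration):

```python
def adoption_rate(sites_with_file: int, sites_checked: int) -> float:
    """Return adoption as a percentage of the sites checked."""
    return 100.0 * sites_with_file / sites_checked


# Figures quoted above: 105 llms.txt files among the Majestic Million.
majestic_rate = adoption_rate(105, 1_000_000)
print(f"{majestic_rate:.4f}%")  # 0.0105%
```

Rounded up, 0.0105% is the 0.011% cited in the text.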

The adoption is real, but it's overwhelmingly concentrated in developer documentation and tech companies. Stripe has one. Anthropic has one. If you're building AI tools for developers, you'll trip over llms.txt files. If you're building AI tools for literally anything else (healthcare, education, government, news, e-commerce), the standard hasn't crossed over yet. That's not unusual for an eighteen-month-old spec. It's the same trajectory that developer-born standards tend to follow. The early adopters are the people closest to the problem. Broader adoption follows if (and only if) the tooling and evidence catch up.

The Inference Gap: The Data That Surprised Me

The inference gap is the disconnect between how llms.txt was designed to be used and how AI systems actually interact with it, and it genuinely surprised me, despite the fact that I spend most of my free time reading specification documents for fun.

The llms.txt spec explicitly states that "our expectation is that llms.txt will mainly be useful for inference." Inference time. Real-time. When a user asks Claude or ChatGPT a question and the AI goes to fetch information to answer it. That's the entire value proposition: you curate your content so the AI can give better answers right now, not six months from now when the training data catches up.

But no major AI provider has publicly confirmed using llms.txt at inference time. Not one.

Google has explicitly rejected the standard. John Mueller of Google compared it to the discredited keywords meta tag in April 2025 (Search Engine Journal), and Gary Illyes said at Search Central Live in July 2025 that Google does not support it (Search Engine Land). Yoast's analysis found that GPTBot, ClaudeBot, and Google's AI crawlers don't routinely request llms.txt files. Their report also cited an unnamed hosting provider managing thousands of sites that observed zero GPTBot activity on llms.txt endpoints, though the provider wasn't identified, so the attribution chain is thin. Still: even taking that datapoint with appropriate caution, the pattern is consistent. The crawlers aren't showing up for the content that was written for them. That's not evidence of the inference-time usage the spec was designed for. It's a gap between the standard's vision and the ecosystem's current behavior, and it's worth understanding honestly, because the people investing effort in their llms.txt files deserve to know what the data actually shows.

That said, there is some evidence of AI crawlers visiting llms.txt files. Mintlify's analysis shows Microsoft and OpenAI crawlers accessing them, and developer Ray Martinez of Archer Education documented GPTBot pinging their llms.txt every 15 minutes with server log evidence. So someone is knocking. But crawling and inference-time usage are different things. In server logs, a training-time crawl and a real-time retrieval look much alike: both are plain GET requests. The main clue is the user agent, and GPTBot is the crawler OpenAI documents for collecting training data. So the evidence is far more consistent with "hoovering up data for the next model" than "using this to answer a user's question right now."
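The user agent is one partial way to tell the two apart in your own logs, at least where a provider publishes separate agents for training crawls and user-triggered fetches. The sketch below counts llms.txt hits by purpose. It assumes the combined access log format, and the token-to-purpose mapping is an assumption based on the providers' published crawler documentation, so re-check it before relying on it. The sample log lines are made up for illustration.

```python
import re
from collections import Counter

# Assumed mapping of user-agent tokens to purpose; verify against each
# provider's current crawler documentation.
AGENT_PURPOSE = {
    "GPTBot": "training",
    "ClaudeBot": "training",
    "OAI-SearchBot": "search",
    "ChatGPT-User": "user-triggered",
    "Claude-User": "user-triggered",
}

# Combined log format: ... "GET /path HTTP/1.1" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def count_llms_txt_hits(lines):
    """Count requests for /llms.txt, grouped by the assumed crawler purpose."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or match.group("path").split("?")[0] != "/llms.txt":
            continue  # not a parseable request, or not for llms.txt
        agent = match.group("agent")
        purpose = next(
            (p for token, p in AGENT_PURPOSE.items() if token in agent), "other"
        )
        counts[purpose] += 1
    return counts


# Hypothetical log lines, not real traffic.
SAMPLE_LINES = [
    '203.0.113.5 - - [01/Jan/2026:00:00:00 +0000] "GET /llms.txt HTTP/1.1" 200 512 "-" "Mozilla/5.0; compatible; GPTBot/1.1"',
    '203.0.113.9 - - [01/Jan/2026:00:05:00 +0000] "GET /llms.txt HTTP/1.1" 200 512 "-" "Mozilla/5.0; compatible; ChatGPT-User/1.0"',
    '203.0.113.5 - - [01/Jan/2026:00:06:00 +0000] "GET /index.html HTTP/1.1" 200 900 "-" "Mozilla/5.0; compatible; GPTBot/1.1"',
]
counts = count_llms_txt_hits(SAMPLE_LINES)
```

A log dominated by the "training" bucket is what you'd expect from bulk collection. Hits in the "user-triggered" bucket would be the more interesting signal, although even those don't prove the file shaped an answer.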

This means the site operators who are carefully crafting llms.txt files and fighting their WAF configurations to make those files accessible may be doing it for an audience that isn't actually showing up at the door. They're leaving the porch light on for guests who may have already eaten elsewhere.

Cloudflare AI Bot Settings: A Configuration Labyrinth

Cloudflare (which according to W3Techs sits in front of roughly 20% of all public websites) provides the tools to configure AI crawler access. They're just scattered across multiple dashboard panels with overlapping authority and an execution order that I can only describe as "designed by someone who wanted job security for the support team."

For the record, I did try to fix the WAF problem on my own site. I am nothing if not persistent, and I had already written a 386-line guide about WAF-AI interactions, so I felt confident. Reader, I was not prepared for what awaited me.

There's the AI Audit dashboard (Security > Bots > AI Scrapers and Crawlers), where you can toggle individual AI crawlers between "Blocked" and "Allowed." Cloudflare introduced the one-click opt-in AI blocking controls in July 2024 under the "AIndependence Day" banner. A year later, in July 2025, they escalated: AI bot blocking became the default on newly created domains. No opt-in required.

There's AI Crawl Control, which offers more granular categories: ai-train (training data collection), search (search engine use), and ai-input (inference-time access). You can set different policies for each category per crawler.

And then there's the WAF Custom Rules layer (Security > WAF > Custom Rules), where you can create path-based exceptions for specific user agents.

Here's the punchline: according to Cloudflare's own documentation, WAF custom rules execute before AI Crawl Control settings. So a security rule you wrote months ago (back when you were a younger, more innocent version of yourself who thought "block non-browser traffic" was a reasonable blanket policy) will intercept an AI crawler before Cloudflare's own AI-specific controls get a chance to say "actually, this one's allowed."
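To make the ordering problem concrete, here is a toy model of phased rule evaluation. It is a deliberate simplification and not Cloudflare's actual engine: the rule names, the predicates, and the "first match ends evaluation" behavior are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Request:
    path: str
    user_agent: str


@dataclass
class Rule:
    name: str
    matches: Callable[[Request], bool]
    action: str  # "block" or "allow"


def evaluate(phases: List[List[Rule]], request: Request) -> Tuple[str, Optional[str]]:
    """Run phases in order; the first matching rule decides and ends evaluation."""
    for phase in phases:
        for rule in phase:
            if rule.matches(request):
                return rule.action, rule.name
    return "allow", None  # nothing matched, so the request passes through


# Hypothetical stand-in for an old "block non-browser traffic" custom rule.
waf_custom_rules = [
    Rule("block non-browser traffic", lambda r: "bot" in r.user_agent.lower(), "block"),
]
# Hypothetical stand-in for a per-crawler "Allowed" toggle.
ai_crawler_settings = [
    Rule("allow GPTBot", lambda r: "GPTBot" in r.user_agent, "allow"),
]

request = Request(path="/llms.txt", user_agent="GPTBot/1.1")  # simplified user agent
decision = evaluate([waf_custom_rules, ai_crawler_settings], request)
print(decision)  # ('block', 'block non-browser traffic')
```

Swap the two phases and the same request is allowed. The toggle never changed; only the order in which the layers got to vote did.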

I know this because I sat in my Cloudflare dashboard at 11 PM on a Tuesday, testing and retesting with curl commands, watching the blocks persist, and wondering which of the overlapping security controls was winning the argument.

It was not my finest hour. But it was educational in the way that touching a hot stove is educational: you only need to learn it once, and you will remember it forever.

If you're in this situation yourself, I've documented the complete diagnostic and mitigation workflow in Why AI Crawlers Get Blocked (and What You Can Do About It). That guide covers everything from curl-based diagnosis to WAF allowlist rules to edge-served workarounds. It's the practical document I wish I'd had when I started down this path.
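The core of that diagnosis can be sketched in a few lines: request the same URL with different user agents and compare the status codes. This is a rough standard-library equivalent of the curl approach, not the guide's actual workflow. The user agent strings are approximations, the helper names are hypothetical, and a spoofed user agent sent from your own IP only approximates how a real crawler is treated.

```python
import urllib.error
import urllib.request
from typing import Dict, Tuple

# Approximate user agent strings, for illustration only.
USER_AGENTS = {
    "browser": "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 "
               "(KHTML, like Gecko) Chrome/120.0 Safari/537.36",
    "gptbot-like": "Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); "
                   "compatible; GPTBot/1.1; +https://openai.com/gptbot",
}


def build_probe(url: str, user_agent: str) -> urllib.request.Request:
    """Build a GET request that identifies itself with the given user agent."""
    return urllib.request.Request(url, headers={"User-Agent": user_agent}, method="GET")


def probe(url: str, user_agent: str, timeout: float = 10.0) -> Tuple[int, bytes]:
    """Return (status code, first 200 bytes of the body), including for HTTP errors."""
    try:
        with urllib.request.urlopen(build_probe(url, user_agent), timeout=timeout) as resp:
            return resp.status, resp.read(200)
    except urllib.error.HTTPError as err:
        return err.code, err.read(200)


def diagnose(statuses: Dict[str, int]) -> str:
    """Compare each agent's status code against the browser baseline."""
    baseline = statuses.get("browser")
    if baseline != 200:
        return f"baseline request failed with {baseline}; fix that first"
    different = sorted(
        name for name, code in statuses.items() if name != "browser" and code != baseline
    )
    if different:
        return "treated differently from a browser: " + ", ".join(different)
    return "no difference by user agent (body may still be a challenge page)"
```

To use it, call `probe("https://your-site.example/llms.txt", ua)` for each entry in `USER_AGENTS`, collect the status codes into a dict keyed by agent name, and pass that dict to `diagnose`. The URL is a placeholder.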

The Systemic Problem: Why Fixing One Site Doesn't Fix llms.txt

The llms.txt Access Paradox isn't a configuration error on one site. It's a structural conflict between how the standard was designed and how the modern web's security infrastructure operates.

The llms.txt standard assumes a frictionless path between "file published" and "file consumed by AI." That assumption doesn't survive contact with the modern web's security infrastructure. Cloudflare alone sits in front of roughly 20% of all public websites. Akamai, AWS WAF, Fastly, Sucuri, Vercel: they all operate bot-detection systems that treat AI crawlers as threats by default. The burden of configuring exceptions falls entirely on the site operator, who has to understand WAF rule ordering, know that robots.txt and WAF policies operate independently, and realize that a 200 OK response might be lying to them.

That's not a scalable solution. That's hoping that every site operator who publishes an llms.txt file also happens to be the kind of person who reads Cloudflare documentation recreationally and finds joy in the phrase "WAF rule execution order." Some of us are. (Hi.) Most people have better things to do with their Tuesday nights.

The infrastructure needs to catch up to the intent. If the web wants AI systems to read curated content (and the existence of llms.txt, Content Signals, CC Signals, and a half-dozen other emerging standards suggests it does), then there needs to be a mechanism that's easier than "create a WAF custom rule that skips bot detection for specific paths and specific user agents and make sure it's ordered above all your other security rules." Something more like: if a site publishes an llms.txt file, that file should be accessible to the systems it was written for, without requiring the site operator to become a WAF expert.

We're not there yet.

What I'm Doing About It

This experience is why the WAF paradox has become the central finding of a longer analytical paper I'm writing about the state of the llms.txt ecosystem. The paper covers the inference gap (no major provider has confirmed using it at inference time), the infrastructure paradox (your own security blocks it even when you want AI access), the trust architecture problem (no validation, no freshness guarantees, no consistency checks), and the standards fragmentation issue (too many overlapping standards, none of them complete).

It's also why LlmsTxtKit treats WAF blocking as a first-class engineering concern rather than an edge case. When the library's fetcher gets a 403 or a JavaScript challenge page, it doesn't just throw an HttpRequestException and leave you to figure out what happened. It classifies the failure, logs diagnostic context, checks for cached content, and returns a structured error that distinguishes "this resource doesn't exist" from "this resource exists but the infrastructure won't let me have it." Because that distinction matters, and because a meaningful percentage of the llms.txt files on the internet are in exactly this state. I wrote the error taxonomy at 2 AM after the Cloudflare incident. It has specificity.
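The shape of that idea can be sketched briefly. What follows is an illustrative Python sketch and not LlmsTxtKit's actual API: the type names, the status-code buckets, and the challenge-page markers are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class FetchOutcome(Enum):
    OK = "ok"
    NOT_FOUND = "not_found"    # the resource doesn't exist
    BLOCKED = "blocked"        # the resource may exist, but access was refused
    CHALLENGE = "challenge"    # an interstitial page came back instead of content
    OTHER_ERROR = "other_error"


@dataclass
class FetchResult:
    outcome: FetchOutcome
    status: int
    content: Optional[str]
    from_cache: bool = False


# Assumed heuristic markers for a JavaScript challenge page.
CHALLENGE_MARKERS = ("just a moment", "checking your browser", "enable javascript")


def classify(status: int, content_type: str, body: str,
             cached: Optional[str] = None) -> FetchResult:
    """Turn a raw HTTP response into a structured result, falling back to cache."""
    is_html = "html" in content_type.lower()
    if is_html and any(marker in body.lower() for marker in CHALLENGE_MARKERS):
        outcome = FetchOutcome.CHALLENGE  # checked first: challenges can arrive as 200
    elif status == 200:
        return FetchResult(FetchOutcome.OK, status, body)
    elif status in (404, 410):
        outcome = FetchOutcome.NOT_FOUND
    elif status == 403:
        outcome = FetchOutcome.BLOCKED
    else:
        outcome = FetchOutcome.OTHER_ERROR
    # On any failure, hand back cached content if there is some.
    return FetchResult(outcome, status, cached, from_cache=cached is not None)
```

A caller can then branch on `outcome` rather than on an exception type, which is what lets it report "blocked, serving a cached copy" differently from "this site has no llms.txt."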

And it's why I spent three hours on a Tuesday night arguing with a Cloudflare dashboard instead of writing C# code, which is what I actually enjoy doing, because sometimes the documentation-first developer has to do fieldwork and the field is a web dashboard with more tabs than a browser window during finals week.

Key Takeaways

- llms.txt adoption is real but narrow. Community directories list hundreds of sites, but Chris Green's Majestic Million analysis found only 105 among the top million domains (roughly 0.011%), and zero in the top 1,000. Adoption is concentrated almost entirely in developer documentation.

- No major AI provider has confirmed inference-time usage. Google explicitly rejected the standard (Mueller, April 2025; Illyes, July 2025). Server log evidence is contradictory and more consistent with training-time crawling than real-time retrieval. The spec was designed for inference; the data doesn't show that happening.

- Cloudflare's AI controls conflict with each other. WAF custom rules execute before AI Crawl Control settings, meaning a security rule can override Cloudflare's own AI-specific toggles. Configuring this correctly requires understanding rule execution order that most site operators never encounter.

- This is a structural problem, not a configuration problem. The llms.txt standard assumes frictionless access. The modern web's security infrastructure provides anything but. The burden of making llms.txt work falls entirely on site operators who have to become WAF experts to serve a Markdown file.

- The most impactful action isn't adding llms.txt; it's reviewing your WAF configuration. The infrastructure paradox affects whether any AI system can access any content on your site, llms.txt or otherwise.

Where This Goes From Here

If you're a site operator who has published an llms.txt file, I'd encourage you to actually test whether AI crawlers can reach it. The diagnostic guide walks you through it. It takes about five minutes with curl. You might be surprised by what you find. I certainly was.

While researching the adoption numbers for this post, I kept running into one figure that didn't add up: a claim that 844,000+ websites had adopted llms.txt. It shows up in blog posts, LinkedIn threads, and conference slides. I tried to trace it back to a primary source. What I found instead was a cautionary tale about how numbers achieve a kind of undead immortality in AI discourse — and why the real adoption data, though smaller, tells a more honest and more interesting story. That's Part 3.

And that verification process? It taught me something about the difference between having sources and having verified sources that changed how I approach every factual claim I write. But that's a methodology story, not a firewall story, and it deserves its own space.

The firewall story is where the Access Paradox starts, because this is where theory meets infrastructure and infrastructure wins. Every time.