The llms.txt Access Paradox: The Data Nobody Wants to Hear

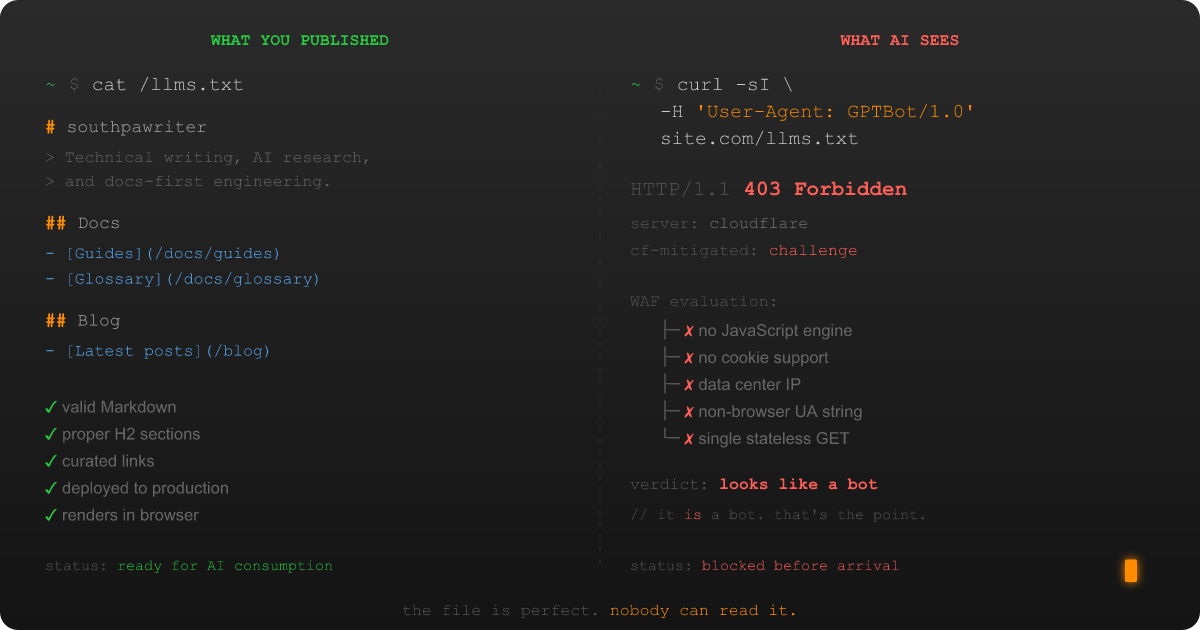

In Part 1, I told the story of discovering that my own hosting infrastructure was blocking AI crawlers from reading the llms.txt file I'd specifically published for them. A Web Application Firewall (WAF), the security layer that inspects every inbound HTTP request, can't tell the difference between "AI system reading curated content as intended" and "malicious bot probing endpoints for vulnerabilities." The result is a paradox that would be hilarious if it weren't also my actual production environment.
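To make that concrete, here is a toy sketch of the kind of rule that produces this outcome. It is not my host's actual WAF configuration: the marker list, the `Request` type, the `waf_allows` function, and the user-agent strings are all hypothetical, invented purely for illustration. Real WAFs weigh far more signals than a User-Agent substring.

```python
from dataclasses import dataclass

# Hypothetical substrings a bot-blocking rule might match on.
# These are illustrative only, not taken from any real WAF product.
BLOCKED_AGENT_MARKERS = ("bot", "crawler", "spider")


@dataclass
class Request:
    path: str
    user_agent: str


def waf_allows(request: Request) -> bool:
    """Toy rule: block any request whose User-Agent looks automated."""
    agent = request.user_agent.lower()
    return not any(marker in agent for marker in BLOCKED_AGENT_MARKERS)


# Made-up clients: a well-behaved AI reader, a vulnerability scanner, a browser.
ai_reader = Request("/llms.txt", "ExampleAIBot/1.0")
scanner = Request("/wp-login.php", "EvilScannerBot/2.3")
browser = Request("/llms.txt", "Mozilla/5.0 (X11; Linux x86_64)")

for name, req in [("ai_reader", ai_reader), ("scanner", scanner), ("browser", browser)]:
    print(name, req.path, "allowed" if waf_allows(req) else "blocked")
```

Under this rule the AI reader and the scanner get the same verdict, because the rule never looks at what was requested or why. Only the browser gets through, which is exactly backwards for a file written for machines.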

That was the personal version, the "I discovered this at 11 PM and said words I can't publish on a professional blog" version. This is the systemic version. The one where I pull at the thread and the whole sweater starts to unravel.

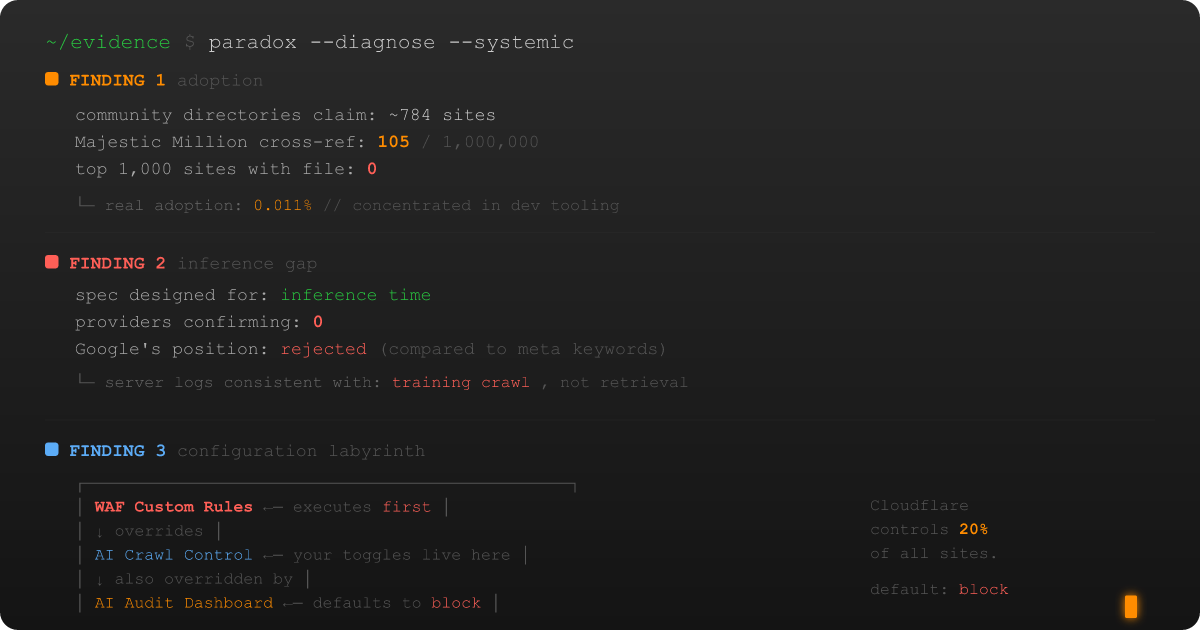

Because once I started asking "how widespread is this?", the answers didn't just confirm the WAF problem. They complicated the entire premise of what llms.txt is supposed to do. And I mean the entire premise.